In every IT environment, servers, applications, and devices continuously generate event data. Without a way to organize it, these logs pile up fast and make it hard to spot problems, keep systems running smoothly, or stay compliant with regulations. Log management consolidates all this data, making it easier to collect, store, analyze, and monitor from a single location.

The scale is massive. In 2023, enterprises processed over 12.5 billion log events every day from their systems and networks. The global log management market reflects this demand, valued at about USD 935.6 million in 2024 and expected to nearly double to USD 1.7 billion by 2033. Organizations rely on log management tools to cut through noise, troubleshoot quickly, detect security risks, stay compliant, and reduce manual effort with automation.

The best tools don’t just handle logs; they simplify the entire process. They centralize data, provide real-time insights, and trigger alerts before minor issues become major problems. This translates into stronger security, smoother operations, and fewer compliance headaches.

Log management tools can help your organization avoid the following pain points:

- Lack of Centralized Visibility: They collect and normalize logs from all systems and applications into one searchable location.

- Slow Incident Detection and Response: Log management software reduces blind spots by showing what’s happening across your network, so your team can spot anomalies and security events faster.

- Audit and Compliance Difficulties: Centralized logs with timestamps and event context provide the evidence needed for regulatory compliance and audit reporting.

- High Operational Burden: Automated log collection and processing reduce the manual effort required to manage large volumes of log data. Your IT and security teams are then free to focus on analysis and response instead of data wrangling.

- Inefficient Troubleshooting: When logs are scattered across systems, finding the root cause of an issue can take a long time. Log management software consolidates everything in one place and makes searching easier.

- Inadequate Forensic Evidence: Comprehensive log storage and indexing ensure that detailed historical data is available for post-incident analysis.

In this article, we’ll break down the leading log management tools and what makes them stand out.

Here’s our list of the best log management tools:

- FirstWave opEvents – Best for network-centric IT ops and NOCs with SNMP/syslog focus.

- ManageEngine Log360 – Best for mid-to-large enterprises needing security and compliance.

- Site24x7 Log Management – Best for IT/DevOps teams in cloud/hybrid environments.

- Splunk – Best for large enterprises and regulated industries with high-scale needs.

- Elastic Stack – Best for skilled DevOps teams wanting customizable open-source solutions.

- Datadog Log Management – Best for cloud-first DevOps and distributed systems teams.

- Sumo Logic – Best for cloud-native and SaaS companies with large data volumes.

- Logz.io – Best for teams seeking simplified ELK with ML anomaly detection.

If you need to know more, explore our vendor highlight section just below, or skip to our detailed vendor reviews.

Best log management tools highlights

Top Feature

Real-time log collection, correlation, and compliance reporting for security teams

Price

Starts at US$2,035 based on minimum requirements

Target Market

Organizations that value compliance, security visibility, and forensic log analysis

Free Trial Length

30-day free trial

Additional Benefits:

- Speeds threat detection by correlating related events across systems

- Supports investigations with searchable historical logs and raw event detail

- Helps meet audit needs with compliance-ready reports across standards

- Improves visibility with centralized logs, dashboards, charts, and alerts

Features:

- Centralized log collection from servers, devices, endpoints, apps, and cloud sources

- Real-time log analysis for immediate event visibility

- Event correlation to link related events and reduce alert noise

- Threat detection and alerting for predefined or custom conditions

- Compliance reporting mapped to PCI DSS, HIPAA, SOX, GDPR, and ISO 27001

Top Feature

Integrated event and trap monitoring tied to network performance visibility

Price

Infrastructure monitoring starts at $1,229 for 50 devices per year

Target Market

SMBs and IT or network teams needing basic event monitoring, SNMP traps, and alerts

Free Trial Length

Free trial available, duration not disclosed by the vendor

Top Feature

Elasticsearch-based log management with open-source entry and paid upgrade path

Price

Starts at $15,000 per year

Target Market

Mid-sized to large organizations needing a powerful, budget-friendly log tool

Free Trial Length

Free plan available

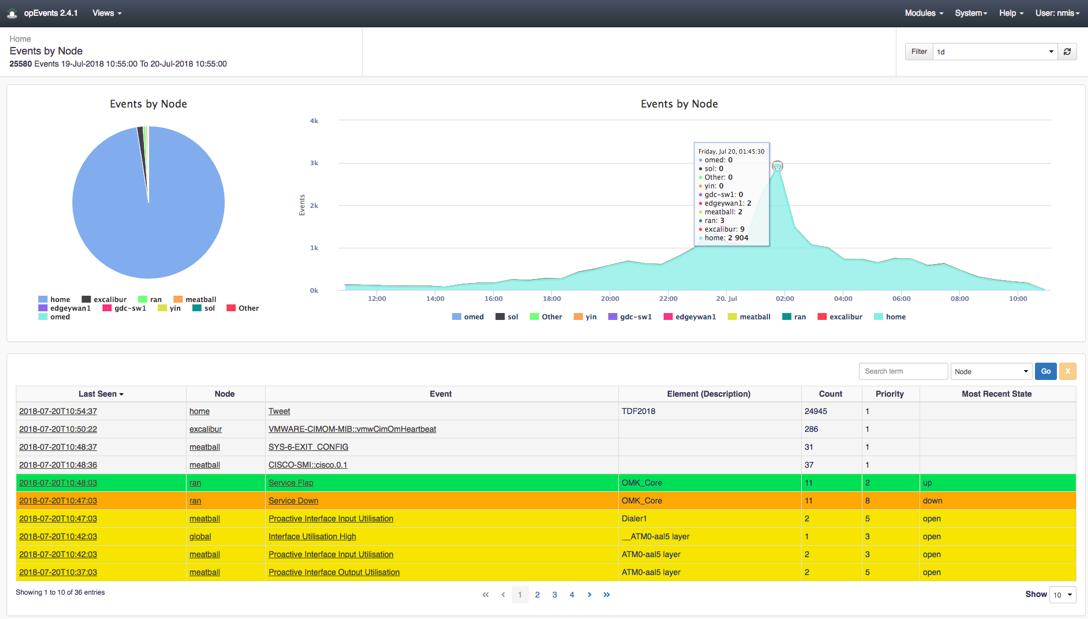

Top Feature

Real-time event collection, enrichment, and correlation for network-centric operations

Price

Negotiated pricing

Target Market

NOC teams needing real-time visibility into events, syslog messages, and SNMP traps

Free Trial Length

Free time-unlimited license for up to 20 nodes; time-limited trials also available

Top Feature

Unified SIEM with real-time log correlation, UEBA, and compliance reporting

Price

Starts at $120 per year

Target Market

Organizations needing centralized log collection, real-time security monitoring, and compliance reporting

Free Trial Length

30-day free trial

Top Feature

Cloud-native log analytics integrated with observability across distributed systems

Price

Professional plan starts at $42 per month paid annually

Target Market

IT operations and security teams in cloud, hybrid, or distributed environments

Free Trial Length

30-day free trial

Top Feature

Indexes and searches machine data at enterprise scale for security and observability

Price

Negotiated pricing

Target Market

Big companies, government agencies, and regulated industries

Free Trial Length

14-day free trial

Top Feature

Unifies observability and security data with scalable log search and visualization

Price

Negotiated pricing

Target Market

Organizations with skilled IT teams that require observability and security on a single platform

Free Trial Length

14-day free trial

Top Feature

Decouples log ingestion from indexing for flexible cost control at scale

Price

Log ingestion starts at $0.10 per GB

Target Market

Cloud-based organizations, DevOps teams, and large companies with distributed systems

Free Trial Length

14-day free trial

Top Feature

Cloud-native log analytics with scalable ingestion and real-time analysis

Price

Negotiated pricing

Target Market

Cloud-first companies, SaaS providers, digital businesses, and enterprises with distributed systems

Free Trial Length

30-day free trial

Top Feature

Managed cloud log analytics with AI insights and cost optimization controls

Price

Open 360 logging ingestion starts at $0.10 per GB of log data ingested

Target Market

Cloud-first or hybrid organizations that generate large amounts of log data

Free Trial Length

14-day free trial

Key points to consider before purchasing a log management tool

When you’re considering a log management solution for your organization, here are the key factors you need to weigh:

- Deployment model: Decide between cloud-based SaaS for flexibility and speed or on-premises for stricter control, compliance, or data residency needs.

- Scalability: Ensure the tool can handle exponential log growth from cloud, IoT, and containerized workloads without performance degradation.

- Cost and total ownership: Compare commercial licensing fees with the hidden staffing and maintenance costs of open-source options.

- Core features: Prioritize real-time search for troubleshooting, advanced security analytics, automated threat detection, and compliance-ready reporting.

- Integration: Confirm the solution connects seamlessly with SIEM platforms, monitoring tools, cloud services, and your existing infrastructure.

- Retention and compliance: Verify configurable storage policies and audit trails to ensure compliance with industry regulations and legal requirements.

- Data security: Look for encryption in transit and at rest, role-based access controls, and secure log forwarding.

- Ease of use: Dashboards, search functions, and workflows should minimize training requirements and accelerate adoption.

- Vendor support and ecosystem: Assess service-level commitments, documentation quality, and the strength of the community or partner ecosystem.

To dive deeper into how we incorporate these into our research and review methodology, skip to our detailed methodology section.

Different Log Formats and Standardization

Logs come in a variety of formats depending on their source. For instance, web servers may produce logs in formats like W3C Extended or Apache logs, while network devices use Syslog, and applications often generate custom-formatted logs. These differences can complicate log analysis, especially in environments with diverse systems.

Log management tools address this by converting logs into a standardized format, enabling seamless analysis and correlation across different systems. This uniformity is vital for gaining comprehensive insights into system behavior and identifying anomalies.
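To make the idea of standardization concrete, here is a minimal sketch of how two differently formatted lines might be mapped onto one common record. It is an illustration only, not how any product reviewed here works: the function names, the output field names, and the sample log lines are all assumptions, and the syslog parser covers only the simple BSD-style layout.

```python
import re
from datetime import datetime, timezone

# Apache/NCSA Common Log Format, for example:
# 203.0.113.7 - alice [10/Oct/2024:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 2326
APACHE_RE = re.compile(
    r'(?P<host>\S+) \S+ (?P<user>\S+) \[(?P<ts>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+)'
)

# BSD-style syslog, for example:
# <34>Oct 10 13:55:36 fw01 sshd[4721]: Failed password for root
SYSLOG_RE = re.compile(
    r'<(?P<pri>\d{1,3})>(?P<ts>[A-Z][a-z]{2} [ \d]\d \d{2}:\d{2}:\d{2}) '
    r'(?P<host>\S+) (?P<tag>[^:\[\s]+)(?:\[\d+\])?: (?P<msg>.*)'
)

SYSLOG_SEVERITIES = [
    "emergency", "alert", "critical", "error",
    "warning", "notice", "info", "debug",
]


def normalize_apache(line):
    """Map a Common Log Format line onto the shared (hypothetical) schema."""
    m = APACHE_RE.match(line)
    if m is None:
        return None
    ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
    return {
        "timestamp": ts.astimezone(timezone.utc).isoformat(),
        "source": m["host"],
        "type": "apache_access",
        "severity": "error" if int(m["status"]) >= 500 else "info",
        "message": f'{m["request"]} -> {m["status"]}',
    }


def normalize_syslog(line, year):
    """Map a BSD-style syslog line onto the same schema.

    These timestamps carry no year or time zone, so the caller supplies
    the year and UTC is assumed.
    """
    m = SYSLOG_RE.match(line)
    if m is None:
        return None
    ts = datetime.strptime(f'{year} {m["ts"]}', "%Y %b %d %H:%M:%S")
    severity_code = int(m["pri"]) % 8  # PRI = facility * 8 + severity
    return {
        "timestamp": ts.replace(tzinfo=timezone.utc).isoformat(),
        "source": m["host"],
        "type": f'syslog_{m["tag"]}',
        "severity": SYSLOG_SEVERITIES[severity_code],
        "message": m["msg"],
    }
```

Once both sources share the same keys, a single search or correlation rule can run over web server and network device events together.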

Filing, Archiving, and Security Applications

Once collected, logs must be organized and archived systematically. Filing logs by source, date, or category ensures they can be retrieved quickly when needed. Archiving is particularly important for compliance purposes, as many regulations require organizations to retain logs for extended periods.

Logs are also instrumental in security scanning. Log management tools can analyze log data in real time to detect suspicious activities, such as unauthorized access attempts or unusual traffic patterns. They often integrate with SIEM (Security Information and Event Management) systems to provide alerts and insights into potential threats.
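As a rough illustration of real-time detection, the sketch below counts failed logins per source address in a sliding time window and raises an alert when a limit is reached. The class name, the event fields (`action`, `source_ip`, `time`), and the default limits are assumptions made for this example; commercial tools use their own rule engines.

```python
from collections import defaultdict, deque


class FailedLoginDetector:
    """Flag a source that produces too many failed logins in a short window."""

    def __init__(self, threshold=5, window_seconds=60):
        self.threshold = threshold
        self.window = window_seconds
        self._failures = defaultdict(deque)  # source_ip -> recent failure times

    def observe(self, event):
        """Feed one normalized event; return an alert dict or None."""
        if event.get("action") != "login_failed":
            return None
        times = self._failures[event["source_ip"]]
        times.append(event["time"])
        # Drop failures that have aged out of the window.
        while times and event["time"] - times[0] > self.window:
            times.popleft()
        if len(times) >= self.threshold:
            alert = {
                "rule": "repeated_failed_logins",
                "source_ip": event["source_ip"],
                "count": len(times),
                "time": event["time"],
            }
            times.clear()  # start counting again after alerting
            return alert
        return None
```

An alert like this would typically be forwarded to a SIEM or a notification channel rather than handled by the log collector itself.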

Cost and Accessibility

Log management tools vary in cost and accessibility. Many vendors offer free tools with basic features suitable for smaller environments, while enterprise-grade solutions often include free trials, allowing organizations to evaluate their capabilities. Selecting the right tool depends on your organization’s size, budget, and specific requirements.

Once you find a log management tool that you like, you will grow to be dependent on it for a range of admin tasks, including Security Information and Event Management (SIEM) and real-time log monitoring of your network and its equipment. If your favorite tool goes out of production, you will need to find a replacement quickly to enable you to continue to manage event logs and sort through all of your log data.

The best log management tools for Windows, Linux, and Mac

1. ManageEngine EventLog Analyzer (FREE TRIAL)

Best For: Organizations that value compliance, security visibility, and forensic log analysis

Price: Starts at US$2,035 based on minimum requirements

ManageEngine EventLog Analyzer is a log management and SIEM solution that collects and analyzes logs from servers, network devices, endpoints, applications, and cloud sources. It correlates these logs and events to help organizations detect threats, investigate incidents, and meet compliance requirements.

EventLog Analyzer is a focused log management and SIEM tool. Its job is to collect, correlate, and analyze logs to support threat detection, incident investigation, and compliance reporting. It is typically used by security teams that need clear answers to who did what and when, and that need to expose policy violations.

ManageEngine EventLog Analyzer can be deployed both on-premises and in the cloud, depending on your needs. It supports traditional on-site installations on Windows or Linux servers. ManageEngine also offers a cloud-based deployment option for organizations that prefer SaaS-style log management and reduced infrastructure overhead.

ManageEngine EventLog Analyzer Key Features:

- Centralized Log Collection: Gathers logs from servers, network devices, firewalls, endpoints, applications, and cloud services into a unified repository.

- Real‑Time Log Analysis: Continuously processes incoming logs and provides immediate visibility into events as they occur.

- Event Correlation: Links related log events to identify patterns, spot suspicious activity, and reduce noise from isolated alerts.

- Threat Detection & Alerting: Generates real‑time alerts for predefined or custom security and operational conditions to support rapid response.

- Compliance Reporting: Offers a broad library of audit‑ready reports mapped to regulations such as PCI DSS, HIPAA, SOX, GDPR, and ISO 27001.

- Audit Trails & Tamper‑Proof Storage: Stores logs in a secure, tamper‑resistant manner with time‑stamped audit trails for accountability and evidence.

- Dashboards & Visualization: Provides customizable dashboards, charts, and summaries that give quick situational awareness for security and operations.

Unique Buying Proposition

The unique buying proposition of ManageEngine EventLog Analyzer as a log management tool is its real-time log collection, correlation, and comprehensive compliance reporting.

It aggregates and analyzes logs from various sources and automatically correlates events to detect security incidents, insider threats, and policy violations. It also provides audit-ready reports for multiple regulatory standards.

However, it may require more manual configuration and fine-tuning compared to fully cloud-native or AI-driven log management platforms.

Feature-In-Focus: Log Correlation and Event Analysis

Real‑time log correlation and security‑oriented event analysis transform raw log data from diverse sources into actionable insights for threat detection, behavioral monitoring, and compliance auditing. With this capability, you can easily spot suspicious activity, correlate events across systems, and generate audit‑ready reports for your organization.
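To show what correlating events across systems can look like in practice, here is a simplified sketch of one classic rule: several failed logins for a user followed by a successful login within a short window, possibly on a different system. This is not ManageEngine's correlation engine; the function name, event fields, and thresholds are assumptions for illustration.

```python
def correlate_failures_then_success(events, min_failures=3, window=300):
    """Return alerts where a login success follows repeated recent failures.

    Each event is a dict with "time" (seconds), "source", "user", "action".
    """
    alerts = []
    failures = {}  # user -> list of failed-login events seen so far
    for ev in sorted(events, key=lambda e: e["time"]):
        user = ev.get("user")
        if ev.get("action") == "login_failed":
            failures.setdefault(user, []).append(ev)
        elif ev.get("action") == "login_success":
            recent = [
                f for f in failures.get(user, [])
                if ev["time"] - f["time"] <= window
            ]
            if len(recent) >= min_failures:
                alerts.append({
                    "rule": "failures_then_success",
                    "user": user,
                    "time": ev["time"],
                    "failed_attempts": len(recent),
                    # Every system that contributed an event to this alert.
                    "sources": sorted({f["source"] for f in recent} | {ev["source"]}),
                })
            failures.pop(user, None)  # a success resets the user's history
    return alerts
```

Taken alone, neither the failures nor the success would necessarily warrant attention; the link between them is what turns isolated log lines into a single actionable alert.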

Why do we recommend ManageEngine EventLog Analyzer?

We recommend ManageEngine EventLog Analyzer because it delivers deep, actionable log visibility with far less complexity than many traditional SIEM platforms. Its real strength is in how quickly it turns raw log data into meaningful insights through built-in correlation rules, real-time alerting, and extensive preconfigured compliance reports.

Its specialized Universal Log Parsing and Indexing (ULPI) architecture provides the forensic agility often missing in standard log collectors. It also employs a sophisticated normalization engine that deciphers over 700 disparate log formats from AWS/Azure cloud trails to legacy on-premise syslogs.

Who is ManageEngine EventLog Analyzer recommended for?

We recommend ManageEngine EventLog Analyzer for organizations where compliance, security visibility, and forensic log analysis are priorities. It is also suitable for security teams that need clear answers to who did what and when.

Pros:

- Real‑time monitoring and correlation: Detects suspicious activity quickly by linking related events across systems.

- Centralized log collection: Collects logs from servers, network devices, endpoints, applications, and cloud sources into one platform.

- Forensic investigation support: Allows detailed searching and filtering of historical logs for incident analysis.

- Customizable alerts and workflows: Generates alerts and can trigger automated responses or integrate with help desks and SIEM workflows.

Cons:

- Less advanced automation: Lacks some of the built‑in AI/ML‑driven automation and anomaly detection found in newer cloud‑native log platforms.

ManageEngine EventLog Analyzer is licensed based on the number of log sources (devices, applications, Windows servers, and workstations), the number of endpoints (Windows workstations), and the number of cloud accounts (AWS accounts, Microsoft 365 tenants).

The minimum number of log sources and endpoints is 10 and 100, respectively. You’ll need to contact sales for a quote below those minimums. Pricing begins at US$2,035, calculated on the minimum number of log sources and endpoints and a single cloud account. A 30-day free trial is available.

2. Progress WhatsUp Gold (FREE TRIAL)

Best For: SMBs and IT or network teams that need basic event monitoring, SNMP trap management, and alerts.

Price: Infrastructure monitoring starts at $1,229 for 50 devices, per year

Progress WhatsUp Gold is a network monitoring and management solution. It provides real-time network discovery, performance monitoring, alerting, and reporting, along with features such as traffic analysis, configuration management, and fault detection to help you identify and resolve issues early.

Progress WhatsUp Gold is not a dedicated log management tool in the strict sense. However, it can collect basic system events, SNMP traps, and alerts from network devices, generate alerts based on monitored events and thresholds, and report on performance metrics and historical trends.

The best use case for this functionality is day-to-day network operations and infrastructure monitoring, where the goal is fast detection and response to availability or performance issues.

If you already have WhatsUp Gold deployed in your organization, you do not need a full log management platform to gain visibility into device health and operational events. WhatsUp Gold can do that for you.

Progress WhatsUp Gold Key Features:

- Event and Trap Collection: Captures basic system events, SNMP traps, and device alerts from network hardware and servers.

- Threshold-Based Alerting: Generates notifications when monitored metrics (e.g., interface status, CPU/memory thresholds) cross defined limits.

- Event Correlation (Basic): Groups related events into meaningful alerts to reduce noise and highlight actionable incidents.

- Performance Reporting: Produces historical trend reports that include events and alerts alongside performance metrics for context.

- Dashboard Visibility: Displays recent events and alert statuses in intuitive dashboards to help teams spot issues quickly.
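The threshold-based alerting described in the list above can be sketched in a few lines. The example below is a generic illustration, not WhatsUp Gold's implementation: the function name, the sample format, and the metric names are assumptions. It notifies only when a metric crosses its limit or recovers, which is one common way to keep repeated polls from flooding a team with identical alerts.

```python
def check_thresholds(sample, limits, breached):
    """Return notifications for one polling sample.

    sample   -- dict such as {"device": "core-sw1", "cpu_pct": 93.0}
    limits   -- dict mapping a metric name to its upper limit
    breached -- set of (device, metric) pairs currently over their limit;
                updated in place so each breach is reported only once
    """
    notifications = []
    for metric, limit in limits.items():
        if metric not in sample:
            continue
        key = (sample["device"], metric)
        over = sample[metric] > limit
        if over and key not in breached:
            breached.add(key)
            notifications.append(
                f'{sample["device"]}: {metric}={sample[metric]} exceeds {limit}'
            )
        elif not over and key in breached:
            breached.discard(key)
            notifications.append(
                f'{sample["device"]}: {metric} back under {limit}'
            )
    return notifications
```

Calling the function once per polling cycle with the same `breached` set gives one message when CPU use first exceeds the limit and one more when it recovers, with silence in between.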

Unique Buying Proposition

WhatsUp Gold provides simple, integrated event and trap awareness directly tied to network performance. This is unique in that it blends event collection with performance monitoring, delivers immediate, context-rich alerts based on network events and thresholds, and reduces operational overhead. You do not need a dedicated log platform or SIEM just to know when a router flaps, a key interface goes down, or a device hits a CPU threshold. WhatsUp Gold captures all that for you.

Why do we recommend Progress WhatsUp Gold?

If your priority is network uptime and performance alerts with some event context, WhatsUp Gold delivers value. However, if you need comprehensive log analytics and security auditing, a dedicated log management tool is a better fit.

Who is Progress WhatsUp Gold recommended for?

WhatsUp Gold is best suited for SMBs, IT teams, and network operations teams that need basic event visibility, SNMP trap handling, and alerting tied to network health, not full log analytics or SIEM capabilities.

It works well in environments where the goal is to quickly detect device events such as interface up/down, resource threshold breaches, or hardware faults and respond to them in real time.

Pros:

- Real-time visibility: Collects and displays event data so teams can spot network anomalies and failures immediately.

- Integrated with monitoring: Event/trap data is tied directly to performance metrics and availability checks, giving context around issues.

- Simple alerting: Threshold-based alerts cut through noise and notify teams when key conditions occur without manual log review.

- Low operational overhead: Setup and maintenance are easier than full log-centric tools, making it suitable for smaller teams focused on network uptime.

Cons:

- Basic alert logic: Threshold alerts are useful but can generate noise if thresholds aren’t tuned; there’s no behavior-based alerting tied to user or security contexts.

WhatsUp Gold is available in several editions to suit different network sizes and needs. The options include Business, Enterprise, Enterprise Plus, and Enterprise Scale. The Enterprise Scale is available through custom pricing.

Pricing is generally based on the number of devices monitored. Licensing is available in both annual subscription and perpetual (one-time purchase) models. Subscription licenses include updates and support for the duration of the term and are billed annually.

WhatsUp Gold is primarily deployed on-premises and can be extended with optional add-on modules for areas like log-related event monitoring, configuration management, and traffic analysis. This flexibility allows you to choose a plan that matches your budget, deployment preferences, and monitoring depth. A 30-day free trial is available upon request.

3. Graylog (FREE PLAN)

Best For: Mid-sized to large organizations that need a powerful but budget-friendly log management tool

Price: Starts at $15,000/yr

Graylog is an open-source log management platform that collects, stores, and analyzes log data from a wide range of sources. It uses Elasticsearch for indexing and searching logs, MongoDB for storing metadata and configurations, and its own Graylog server for processing and managing log messages.

Graylog can be deployed on-premises or in the cloud. It’s often chosen by mid-sized businesses, enterprises with compliance needs, and technical teams that want the power of Elasticsearch but with a friendlier interface and management layer on top.

Although Graylog is open source, not all of it is free. Here’s the breakdown:

- Graylog Open: This is the free, open-source edition, licensed under the GNU GPL v3.

- Graylog Enterprise: A paid version that adds advanced features like archive support, reporting, user audit logs, and enterprise integrations.

- Graylog Cloud: A subscription-based, fully managed SaaS version hosted by Graylog.

Graylog Key Features:

- Elasticsearch-backed search engine: Fast, distributed log indexing and querying built on Elasticsearch but simplified with Graylog’s UI.

- Stream-based log processing: Lets teams route, filter, and tag log messages in real time for better organization and faster incident response.

- Role-based access control (RBAC): Provides fine-grained permissions so only the right users can view or manage specific log data.

- Alerting with condition-based rules: Triggers notifications via email, Slack, or external integrations when log events meet specified thresholds.

- Archiving and retention management: The Enterprise edition provides structured log storage for compliance and historical analysis.
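The idea behind stream-based processing in the list above is that each incoming message is tested against a set of rules and tagged with every stream it matches. The sketch below illustrates that concept in plain Python. It does not use Graylog's API or rule syntax; the stream names, field names, and patterns are hypothetical.

```python
import re

# Hypothetical stream definitions: a message joins a stream only when
# every (field, pattern) condition for that stream matches.
STREAM_RULES = {
    "auth-failures": [
        ("message", re.compile(r"Failed password|authentication failure", re.I)),
    ],
    "firewall": [
        ("source", re.compile(r"^fw\d+$")),
    ],
}


def route(message, rules=STREAM_RULES):
    """Return the names of all streams whose conditions the message meets."""
    matched = []
    for stream, conditions in rules.items():
        if all(
            pattern.search(str(message.get(field, "")))
            for field, pattern in conditions
        ):
            matched.append(stream)
    return matched
```

A message from `fw01` containing "Failed password" would land in both streams, so a firewall dashboard and an authentication alert rule could each act on it independently.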

Unique Buying Proposition

Graylog’s biggest advantage is its robust log management features that come at a lower cost than many enterprise tools. It’s built on strong technology (like Elasticsearch) but adds a user-friendly interface that makes it easier for teams to search logs, create dashboards, and set up alerts with minimal technical expertise.

It’s more affordable than Splunk and less complicated to run than Elastic, yet still powerful enough for enterprises. That uniqueness in cost savings, usability, and flexibility is what makes it appealing to many organizations.

Feature-In-Focus: Log Collection and Structured Log Analysis

Log collection and structured log analysis features help your organization ingest logs from many sources and normalize them into a consistent format. Your security or audit teams can then search, filter, and trigger alerts in real time to support troubleshooting, security monitoring, and compliance.

Why do we recommend Graylog?

Graylog earns its spot among the top log management tools because it solves real-world organizational problems in log management:

- It lets you see, search, and analyze logs from many systems, servers, and applications in real time, even when data volumes are very high.

- It doesn’t require a big team of specialists to set up and manage.

- It keeps costs lower, especially with its open-source option.

In 2024, it won the Gold Award for Central Log Management at the Cybersecurity Excellence Awards. It was also named a Leader in the 2025 GigaOm Radar Report for SIEM. That success reflects an ongoing commitment to delivering log management with strong security capabilities.

Who is Graylog recommended for?

Graylog is best suited for mid-sized to large organizations that need budget-friendly but powerful log management and SIEM capabilities. Its open-source base appeals to companies that want flexibility and control. The enterprise and cloud versions give regulated industries and hybrid setups options to meet compliance and data-residency requirements.

It’s not for organizations that want advanced AI-driven analytics and predictive insights out of the box or for businesses that are fully cloud-native and prefer an exclusively managed service. For these cases, tools like Sumo Logic or Splunk might be better aligned.

Pros:

- Cost efficiency: More affordable than tools like Splunk, especially at a large scale.

- Balanced usability: Delivers strong capabilities without requiring deep Elasticsearch expertise.

- Scalable upgrade path: Scales from open-source to enterprise or cloud editions without changing tools.

- Active community: Benefits from a strong ecosystem of plugins, integrations, and shared knowledge.

Cons:

- Deployment complexity: Setup and tuning can be harder than with fully cloud-native platforms like Sumo Logic.

- Paid feature gating: Advanced features, such as reporting and archiving, are available only in paid editions.

Graylog offers three main pricing tiers: Enterprise, Security, and API Security. The Enterprise plan starts at $15,000 per year and is designed for SecOps, ITOps, and DevOps teams. The Security plan begins at $18,000 per year and focuses on SIEM capabilities. Similarly, API Security starts at $18,000 per year and provides discovery and end-to-end protection for critical APIs.

All plans can be deployed in the cloud or on-premises through the Graylog platform. However, you need to contact sales for exact pricing and licensing based on your team size and usage. Enterprise-grade support is included with these paid plans; the free option is the separate Graylog Open edition.

4. FirstWave opEvents (FREE PLAN)

Best For: NOC teams that need real-time visibility into events, syslog messages, and SNMP traps

Price: Not openly published on their website

FirstWave opEvents is a security event management platform that collects, normalizes, and analyzes event and security data from multiple sources. Collected data can be used to detect threats, investigate incidents, and improve overall security visibility. It processes and standardizes disparate log formats so they can be analyzed together.

In other words, opEvents can be used for log‑related event analysis and correlation as part of security monitoring, especially where the goal is threat detection and incident response. However, it is not a full-fledged log management platform in the traditional sense. Comprehensive log storage, deep ad‑hoc querying, and compliance‑centric log auditing are better handled by dedicated log management or SIEM solutions.

opEvents is available as its own installer or as part of a FirstWave Virtual Machine package. It is a separate module with its own installer and database, so you can download it independently and use it for log and event management on its own, but NMIS must already be installed on the same server.

FirstWave opEvents Key Features:

- Unified Event and Log Consolidation: Collects and centralizes event and log data from SYSLOG, SNMP traps, log files, and APIs into a single handler for streamlined troubleshooting.

- Enrichment and Correlation: Enriches raw events with context and correlates related events to reduce noise and present a clear, single-pane-of-glass view.

- Policy-based Alerting: Uses business-aware policies to identify events, enrich log streams, and generate detailed, relevant notifications aligned with operational priorities.

- Automated Event Handling: Automatically resolves or suppresses events based on predefined policies.

- Proactive Event Management: Applies ITIL v3 best practices to organize, manage, and act on events in real time.

- Threat and Fault Detection: Analyzes event logs to surface potential threats and operational issues, supported by intelligent automation for faster response.

Unique Buying Proposition

opEvents’ buying proposition is operational event intelligence, not log analytics. It is best used to manage alerts and events generated from logs and monitoring systems, especially in network-centric environments.

It provides centralized, real-time collection and correlation of events and logs from multiple sources. Correlation is used to deduplicate, suppress, and prioritize events to help you understand what matters right now.
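The deduplicate, suppress, and prioritize steps described above can be sketched as a small triage function. This is a conceptual illustration and not opEvents' policy engine: the function name, the event fields (`node`, `event`, `priority`, `time`), and the priority labels are assumptions.

```python
PRIORITY_ORDER = {"critical": 0, "major": 1, "minor": 2, "info": 3}


def triage(events, dedup_window=300, suppressed_nodes=frozenset()):
    """Suppress, deduplicate, then prioritize a batch of events.

    - Events from nodes in suppressed_nodes (for example, devices under
      maintenance) are dropped.
    - A repeat of the same (node, event) pair is dropped when it arrives
      less than dedup_window seconds after the previous occurrence.
    - Survivors are returned most urgent first, oldest first within a level.
    """
    last_seen = {}
    kept = []
    for ev in sorted(events, key=lambda e: e["time"]):
        if ev["node"] in suppressed_nodes:
            continue
        key = (ev["node"], ev["event"])
        previous = last_seen.get(key)
        last_seen[key] = ev["time"]  # a repeat extends the quiet period
        if previous is not None and ev["time"] - previous < dedup_window:
            continue
        kept.append(ev)
    return sorted(
        kept,
        key=lambda e: (PRIORITY_ORDER.get(e["priority"], len(PRIORITY_ORDER)), e["time"]),
    )
```

Because each repeat refreshes the last-seen time, a flapping interface that reports every minute produces a single entry rather than one per flap, which is the kind of noise reduction that matters most in a busy NOC.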

Feature-In-Focus: Real‑time Event Collection, Enrichment, and Correlation

FirstWave opEvents’ real-time event collection, enrichment, and correlation engine brings together event and log data from network devices, servers, SNMP traps, syslog streams, and application APIs to present a consolidated, actionable view of operational events and alerts.

Why do we recommend FirstWave opEvents?

We recommend FirstWave opEvents because it works well when you need to collect logs and events from multiple sources, normalize them for analysis, and correlate related activities to identify potential threats or suspicious behavior. You can use it to detect security incidents faster, reduce alert noise, and add context to logs for easier investigation.

Who is FirstWave opEvents recommended for?

We recommend FirstWave opEvents for network-centric IT operations teams. Its ideal market includes small to mid-sized enterprises, service providers, and NOCs that need real-time visibility into events, syslog messages, and SNMP traps. We highly recommend it for environments already using NMIS and focused on network and infrastructure operations.

Pros:

- Strong noise reduction: Correlation and policy-driven enrichment significantly cut alert fatigue compared to raw log collection.

- Network-operations focused: Well-suited for environments relying on SYSLOG and SNMP traps, especially in NOC and infrastructure teams.

- Faster incident response: Automation and proactive handling help shorten outages and reduce mean time to resolution.

- Standards-based approach: Built around ITIL v3 best practices, aligning well with mature service management processes.

Cons:

- Limited compliance reporting: Not designed for audit-heavy or regulatory-driven log retention use cases.

- Network-centric scope: Less effective for application-heavy, cloud-native, or developer-focused logging needs.

opEvents uses a node-based licensing model. You pay based on the number of monitored nodes. Both perpetual (one-time purchase) and subscription (annual) licenses are available.

When you install opEvents (typically with NMIS), you can activate a time-unlimited free license for up to 20 nodes, which is useful for small environments or evaluation. FirstWave does not publish fixed price sheets online. You’ll need to contact sales to get a quote.

5. ManageEngine Log360 (FREE TRIAL)

Best For: Organizations that need centralized log collection, real‑time security monitoring, and compliance reporting

Price: Starts at $120 per year

ManageEngine Log360 is a unified SIEM platform that centralizes log collection, analysis, threat detection, and automated response. Log360 collects and correlates logs from on-premises, cloud, and hybrid environments. It then uses this data to provide clear visibility across endpoints, servers, network devices, applications, and cloud platforms.

In contrast to ManageEngine EventLog Analyzer, Log360 is a broader security analytics platform. Log360 includes EventLog Analyzer as a core component, but extends it with UEBA, Active Directory auditing, file integrity monitoring, CASB, and threat intelligence. It is meant for organizations that need centralized visibility across identity, logs, and user behavior, not just log analysis.

The software is available both as a traditional on‑premises SIEM solution that you install and run within your own infrastructure and as a cloud‑based SIEM offering called Log360 Cloud.

ManageEngine Log360 Key Features:

- Centralized Log Collection: Gathers logs from servers, endpoints, network devices, firewalls, applications, and cloud sources into a single platform for unified visibility.

- Real‑time Correlation and Alerting: Correlates events across logs in real time and triggers alerts for suspicious activities, policy violations, or defined thresholds.

- User and Entity Behavior Analytics (UEBA): Detects unusual user behavior indicative of insider threats, compromised credentials, or lateral movement.

- Forensic Investigation Support: Stores historical logs in tamper‑proof repositories and enables detailed search and filtering for post‑incident analysis.

- Threat Intelligence Integration: Enhances alert accuracy by correlating log events with known threat feeds and patterns.

- Automated Incident Workflows: Supports automated responses, ticketing integration, and workflow triggers to accelerate investigation and remediation.

- Log archival and retention: Manages long‑term log storage with configurable retention policies that satisfy audit and compliance requirements.

Unique Buying Proposition

Log360’s biggest advantage as a log management tool is its centralized, real‑time log correlation, built-in security analytics, and compliance reporting, packaged in a single, easy‑to‑deploy platform. Log360 links log events to user behavior (UEBA), threat intelligence, and predefined compliance frameworks to deliver more actionable security insights and audit‑ready evidence.

If you operate a SOC, Log360 provides the tools you need to stay ahead of cyber threats efficiently and confidently. By “tools,” we mean clear visibility across your environment, accurate threat detection, and automated response capabilities in one place.

Feature-In-Focus: Real-time Log Correlation and Security Analytics

Real‑time log correlation and security analytics turn raw network log data into actionable security insights. This includes linking events across systems, detecting anomalies, and surfacing suspicious behavior to support monitoring, threat detection, and compliance reporting.
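To make "linking events across systems" concrete, here is a minimal sketch of one correlation rule: several failed logins followed by a success within a short window, regardless of which system logged each event. This is an illustration of the general technique, not Log360's implementation; the `Event` fields, the `correlate_bruteforce` function, and the threshold and window values are all hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Event:
    ts: float     # seconds since some reference time
    source: str   # system that emitted the log (hypothetical names)
    user: str
    action: str   # "login_failed" or "login_ok"


def correlate_bruteforce(events, threshold=3, window=60.0):
    """Alert when a user has >= threshold failures followed by a success
    within `window` seconds, across all log sources."""
    failures = defaultdict(deque)  # user -> timestamps of recent failures
    alerts = []
    for ev in sorted(events, key=lambda e: e.ts):
        recent = failures[ev.user]
        # Drop failures that have aged out of the correlation window.
        while recent and ev.ts - recent[0] > window:
            recent.popleft()
        if ev.action == "login_failed":
            recent.append(ev.ts)
        elif ev.action == "login_ok" and len(recent) >= threshold:
            alerts.append((ev.user, len(recent), ev.source))
            recent.clear()
    return alerts


events = [
    Event(0, "vpn", "alice", "login_failed"),
    Event(10, "ad", "alice", "login_failed"),
    Event(20, "vpn", "alice", "login_failed"),
    Event(25, "ad", "alice", "login_ok"),
    Event(30, "ad", "bob", "login_ok"),
]
alerts = correlate_bruteforce(events)
print(alerts)
```

The point of the sketch is that neither the VPN log nor the directory log looks alarming on its own; the alert only appears once events from both sources are evaluated together.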

Why do we recommend ManageEngine Log360?

We recommend ManageEngine Log360 because it integrates real-time threat detection, UEBA, SOAR, compliance reporting, and AI-powered investigation. Its ability to correlate events across your endpoints, networks, applications, and cloud services, coupled with automated incident response, provides SOCs and security teams with a clear, real-time view of threats.

Who is ManageEngine Log360 recommended for?

Log360 is aimed at mid‑sized to large enterprises that need centralized log collection, real‑time security monitoring, and compliance reporting. It is also suitable for security organizations looking for a platform that integrates log analytics, threat intelligence, and automated workflows.

Pros:

- Centralized Visibility: Unifies security visibility across on-premises, cloud, and hybrid environments.

- Reduced Alert Fatigue: Uses intelligent correlation and behavior-based detection to surface meaningful alerts.

- Faster Incident Response: Accelerates response through built-in SOAR and automation.

Cons:

- Limited Strategic Risk Focus: More focused on detection and response than on strategic cyber risk quantification.

ManageEngine Log360 offers flexible deployment and licensing options. Cloud plans start with a free tier that provides 50 GB of search storage and 150 GB of archival storage. Paid cloud subscriptions include tiers such as the Basic plan ($120 per year), the Standard plan ($540 per year), and the Professional plan ($840 per year).

Each tier has the same base storage, but higher tiers add longer retention and more alert profiles and correlation rules. A full 30‑day free trial of the broader Log360 SIEM solution is also available.

6. Site24x7 Log Management (FREE TRIAL)

Best For: IT operations and security teams in organizations that run cloud, hybrid, or distributed systems

Price: Professional plan starts at $42/month

Site24x7 Log Management is a cloud-native, SaaS log platform focused on high-scale log ingestion, real-time analytics, and multi-source observability across cloud, hybrid, and on-prem systems. Site24x7 is owned by Zoho Corporation, a privately held technology company that also operates the ManageEngine IT management suite.

The log management platform is part of the broader Site24x7 observability suite, which also includes infrastructure, network, application, and synthetic monitoring. Although Site24x7 Log Management and ManageEngine’s log-centric products (Log360 and EventLog Analyzer) all deal with machine data, they are built for different use cases and environments, and each brings distinct strengths to the table.

Site24x7 Log Management brings cloud-scale, observability-centric log analytics that emphasize performance context, integrated metrics/traces, and ease of onboarding. ManageEngine Log360 and EventLog Analyzer, on the other hand, excel at security-focused log auditing, compliance reporting, and detailed user/event forensics. Your choice should depend on whether you need broad operational observability (Site24x7) or a deep security audit and compliance focus (Log360/EventLog Analyzer).

Site24x7 Log Management Key Features:

- Centralized Log Monitoring: Collects and analyzes logs from applications, servers, and infrastructure in a single console.

- Automatic Log Discovery: Detects log sources across your environment without manual setup.

- Custom Dashboards: Correlates log metrics and events using configurable visual dashboards.

- Advanced Search and Filtering: Enables detailed analysis of log data through flexible queries.

- Alerts and Notifications: Triggers alerts for critical events and anomalies in real time.

- Third-Party Integrations: Connects with ITSM and other external tools for unified incident handling.

- Reporting and Exports: Generates custom reports and allows log data export for audits or deeper analysis.

- Multi-Cloud Support: Manages logs across AWS, Azure, and Google Cloud Platform.

Unique Buying Proposition

The unique buying proposition of Site24x7 Log Management is that it delivers cloud-native, scalable log collection and real-time analytics, fully integrated with infrastructure, application, and network observability. Site24x7’s approach is built for dynamic, distributed environments (cloud, hybrid, containers). This matters because these environments change quickly, and log management must keep pace.

Feature-In-Focus: Log collection and real-time analysis

Site24x7 automatically discovers and ingests logs from infrastructure, applications, and cloud services. It also provides flexible search and filtering through an intuitive query language, and correlates log metrics on customizable dashboards. Its real-time alerts and actionable insights help teams resolve performance issues and operational problems faster.

Why do we recommend Site24x7 Log Management?

We recommend Site24x7 Log Management for its simplicity and convenience. It operates entirely in the cloud, which makes it easy to set up and use. You can access and manage your logs from anywhere.

Our assessment shows that it automatically discovers logs and provides real-time visibility through dashboards and alerts. The software also integrates tightly with infrastructure, application, and cloud monitoring in the broader Site24x7 platform.

Who is Site24x7 Log Management recommended for?

We recommend Site24x7 Log Management for IT operations, DevOps, and small to mid‑sized security teams in organizations that run cloud, hybrid, or distributed systems and need centralized visibility into logs and related telemetry.

Pros:

- Cloud-native architecture: Works effectively across distributed and multi-cloud environments.

- Unified console: Reduces tool sprawl by centralizing log monitoring and troubleshooting.

- Built-in automation: Speeds up response to recurring operational issues.

- Platform integration: Fits naturally into broader Site24x7 monitoring and IT operations workflows.

Cons:

- Ecosystem dependency: Delivers the strongest value when used alongside other Site24x7 modules.

Site24x7 Log Management is offered as a cloud‑native log management service that you can add to Site24x7’s broader monitoring platform. Log pricing is not a standalone fixed plan. It is offered as an add-on tier. The log tiers can be attached to your Site24x7 account in addition to your base monitoring plan.

Pricing is based on log ingestion volume and retention period. The Professional tier is the most popular and includes 4GB log ingestion at $42/month paid annually. The Enterprise tier starts at $625/month (paid annually) and includes all features available in the Professional plan, as well as anomaly detection, event correlation, and other advanced features. You can get started with a 30‑day free trial.

7. Splunk

Best For: Big companies, government agencies, and regulated industries

Price: Not publicly listed on their website

Splunk is a log management platform that you can use to index, search, and analyze machine-generated data like logs, configurations, and events via a web-style interface. It powers real-time visualization, alerts, dashboards, and reports. Splunk’s architecture uses lightweight agents or APIs to gather data, index it, and serve it via flexible queries. It spans log management, observability, SIEM, SOAR, and analytics. Splunk supports both on-premises and cloud deployments. Enterprises can choose Splunk Enterprise or its managed alternative, Splunk Cloud.

In the past year, Splunk earned multiple high-profile recognitions, reinforcing its industry standing. It was named a Leader in the 2025 Gartner Magic Quadrant for Observability Platforms for the third consecutive year and remains the only vendor recognized simultaneously in both the SIEM and Observability quadrants. It also earned Leader status in the Forrester Wave: Security Analytics Platforms (Q2 2025) with top scores across analytics, detection engineering, compliance reporting, automation, and more. That level of recognition, together with its deployment flexibility, feature breadth, and strong market presence, explains why Splunk stands among the top log management tools.

In March 2024, Cisco completed its acquisition of Splunk. Splunk’s strengths in data analytics, security, and observability will complement Cisco’s network and AI ambitions. The acquisition is expected to accelerate product innovation, deepen security capabilities, and drive a unified platform across infrastructure and data intelligence.

In a nutshell, Splunk remains one of the most capable and widely recognized log management platforms in the market. The AI-native enhancements introduced over the past few years have strengthened its capabilities. But these capabilities come with one of the highest price tags in the category, which can escalate quickly as log volumes grow.

Splunk Key Features:

- Real-time data ingestion and search: Collects and indexes data from any source at scale, and enables instant queries with its Search Processing Language (SPL).

- Advanced analytics and dashboards: Provides customizable dashboards, machine learning models, and anomaly detection for deep insights.

- Security and compliance tools: Integrated SIEM and SOAR capabilities help detect threats, automate responses, and generate compliance-ready reports.

- Flexible deployment: Available as Splunk Enterprise (on-premises) or Splunk Cloud (SaaS), depending on compliance and infrastructure needs.

- Extensive integrations: Supports hundreds of third-party apps and add-ons for IT, DevOps, and security ecosystems.

Unique Buying Proposition

Splunk’s unique selling point is its ability to ingest, index, and analyze massive volumes of machine data in real time, at enterprise scale, across both security and observability use cases. Splunk’s powerful search processing language (SPL) and rich ecosystem of apps and integrations further differentiate it from other log management tools.

Feature-In-Focus: Real-time Log Collection, Indexing, and Analysis

Splunk excels at ingesting machine data from servers, applications, networks, and cloud services. It makes this data instantly searchable and actionable. The platform also provides dashboards, alerts, and analytics to help you monitor performance, detect anomalies, and support security and compliance audits.

Why do we recommend Splunk?

We recommend Splunk because it meets the key criteria organizations should use when evaluating log management tools. It supports both cloud and on-premises deployment, scales to handle massive log volumes, and offers enterprise-grade security with encryption and role-based access controls.

Cisco’s acquisition of Splunk further strengthens its long-term viability, adding global reach, financial backing, and tighter integration with networking and AI-driven security capabilities.

Who is Splunk recommended for?

Splunk is best suited for large enterprises, government agencies, and regulated industries that require scale, a strong security posture, and compliance-ready reporting. Although its cost may be high for smaller teams, Splunk offers an unmatched breadth of features and robust capabilities for businesses where system outages, security breaches, or compliance issues could cause serious problems.

Pros:

- AI-native analytics: Delivers real-time insights across systems using built-in AI capabilities.

- Cost control: Supports full machine data lifecycle management to help manage and optimize costs.

- Built-in threat intelligence: Enriches alerts and accelerates detection and response.

- Natural language search: Allows faster investigation and troubleshooting without complex queries.

- Broad deployment support: Integrates seamlessly with AWS, Azure, GCP, private cloud, and on-premises environments.

- Unified data visibility: Works with logs, metrics, traces, events, and more in a single platform.

Cons:

- Cost at scale: Can become expensive for environments with very high data volumes.

- On-premises overhead: On-prem deployments demand significant setup and ongoing maintenance.

- Feature complexity: Advanced AI features may exceed the needs of smaller teams or organizations.

Splunk Log Management is part of the Splunk Platform and is available as a cloud-hosted service (Splunk Cloud Platform) and as an on-premises or private-cloud deployment. Pricing is quote-based and depends on your selected pricing model, which can include ingest-based, workload-based, entity-based, or activity-based pricing, depending on how you collect and use data.

The platform offers a free trial that allows you to evaluate log ingestion, search, and analytics capabilities before you commit. Paid plans are typically billed annually and scale based on data volume, workload type, or the number of your monitored entities.

8. Elastic Stack

Best For: Organizations with skilled IT teams that require observability and security on a single platform

Price: Not publicly available on their website

The Elastic Stack, commonly referred to as the ELK Stack, combines Elasticsearch, Logstash, and Kibana into a powerful platform for ingesting, indexing, searching, and visualizing logs. It is open source, highly scalable, and flexible.

You can deploy it on-premises, in private clouds, or use Elastic’s managed cloud service, Elastic Cloud. Its interoperability with OpenTelemetry, support for diverse log sources, and robust pipeline management (via Kibana and Fleet) make it appealing for organizations aiming to centralize observability and analyze cross-domain machine data efficiently.

This stack is highly regarded in enterprise observability and security. Elastic was named a Leader in the 2025 Gartner Magic Quadrant for Observability Platforms. It also earned Leader status in the Forrester Wave: Security Analytics Platforms (Q2 2025), praised for its AI-driven detection, open detection logic, and easy data ingestion. Its recognition across major analyst reports indicates solid enterprise adoption.

Elastic Stack has earned its place among the top log management solutions due to its flexibility, scalability, and strong open-source roots. However, it is not as easy to use as some plug-and-play log management tools, which can make it challenging for smaller teams with limited staff. It is the better choice if your team has the expertise to manage and customize the platform effectively.

Elastic Stack Key Features:

- Elasticsearch: A distributed, JSON-based search and analytics engine built for speed and scale.

- Kibana: A powerful visualization layer that turns raw log data into interactive dashboards, time-series analysis, and real-time reporting, all within a single user interface.

- Integrations: Out-of-the-box support for data ingestion through Elastic Agent, Beats, and web crawlers, with the ability to collect logs from applications, infrastructure, and external sources.

- Machine Learning & Security: Native Elastic features for anomaly detection, predictive analytics, and security monitoring, built to extend beyond basic log management.

- Reporting: Tools to generate and share insights directly from Kibana dashboards.

Unique Buying Proposition

Elastic Stack’s unique selling point is its open, flexible architecture that lets you collect, search, and visualize data from virtually any source at scale. Its seamless integration with OpenTelemetry, AI-driven analytics, and ability to unify observability and security data on a single platform make it attractive to organizations that want customization without vendor lock-in.

Feature-In-Focus: Centralized Log Ingestion, Search, and Analysis

Elastic Stack’s centralized log ingestion, search, and analysis refers to its ability to collect logs from multiple sources and store and index them in a central repository. It then makes these logs instantly searchable and analyzable through Kibana dashboards, queries, and visualizations. You can use it to quickly troubleshoot issues, spot unusual activity, and keep a clear, searchable history for audits and compliance.
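As a rough illustration of what searching centrally indexed logs looks like, the sketch below builds an Elasticsearch Query DSL request body for recent log lines that match a phrase. It only constructs the JSON and does not contact a cluster. The helper name `build_log_query` is hypothetical, and the field names (`@timestamp`, `message`, `log.level`) are assumptions about how your logs are mapped.

```python
import json


def build_log_query(text, minutes=15, level=None, size=50):
    """Build a Query DSL body: full-text match on the message,
    filtered to a recent time range and (optionally) one log level."""
    filters = [{"range": {"@timestamp": {"gte": f"now-{minutes}m"}}}]
    if level is not None:
        filters.append({"term": {"log.level": level}})
    return {
        "size": size,
        "sort": [{"@timestamp": {"order": "desc"}}],
        "query": {
            "bool": {
                "must": [{"match": {"message": text}}],  # scored text match
                "filter": filters,                       # unscored filters
            }
        },
    }


body = build_log_query("connection timeout", minutes=30, level="error")
print(json.dumps(body, indent=2))
```

A body like this would typically be sent to the `_search` endpoint of whichever index or data stream holds your logs; Kibana builds comparable queries behind its search bar and dashboards.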

Why do we recommend Elastic Stack?

We recommend the Elastic Stack for its features, deployment flexibility, cost, scalability, and integration capabilities. Elastic provides features such as real-time search, AI-driven analytics, threat detection, observability, and compliance reporting.

On deployment flexibility, you can deploy it on-premises for full control or use Elastic Cloud as a managed service. Its open-source base keeps costs lower than many enterprise-only tools, and Elasticsearch scales easily to handle massive log volumes. It also integrates smoothly with OpenTelemetry, SDKs, AWS, Azure, GCP, and many business apps.

These strengths make it one of the most complete and adaptable log management solutions available to enterprises today.

Who is Elastic Stack recommended for?

The Elastic Stack is best suited for organizations with skilled IT or DevOps teams that require both observability and security on a single platform.

If your team values customization, open standards, and the ability to control costs, the Elastic Stack is one of the most strategic choices for log management.

Pros:

- Open-source foundation: Provides flexibility and a low-cost entry point.

- High scalability: Designed to handle large, distributed log data volumes.

- Strong visualization: Offers customizable and prebuilt dashboards for clear insights.

- Broad integrations: Supports fast data ingestion from many different sources.

- Built-in advanced analytics: Includes native machine learning and anomaly detection features.

Cons:

- Learning curve: More complex to learn than simpler, turnkey log management tools.

- Operational complexity: Resource-heavy deployments require skilled staff to manage effectively.

- Rising costs at scale: Expenses can increase when moving to enterprise or cloud versions.

- Manual setup effort: Requires more configuration and tuning than all-in-one commercial platforms.

Elastic Stack offers flexible pricing and deployment options depending on how you want to use it. You can start with a free 14‑day trial of Elastic Cloud to deploy Elasticsearch, Kibana, and related features on AWS, Azure, or Google Cloud.

The core Elastic Stack components (Elasticsearch, Kibana, Logstash, and Beats) are open source and available free for self‑managed deployment. Pricing for paid cloud or managed service plans varies based on the resources you use (such as node size, data volume, and cluster configuration).

Cloud plans and self‑managed subscriptions both support a broad range of use cases from logging and monitoring to security and observability. However, exact costs depend on your deployment size and chosen features, and you must contact Elastic or use their pricing calculator for detailed quotes.

9. Datadog

Best For: Cloud-based organizations, DevOps teams, and large companies with distributed systems

Price: Log ingestion starts at $0.10 per GB

Datadog Log Management is a cloud-based SaaS solution that lets you collect, search, analyze, and monitor log data at scale. It’s part of the broader Datadog observability platform that correlates logs with metrics, traces, and security signals in one place.

Datadog approaches log management differently than most. Instead of forcing you to choose upfront which logs to index (and risk losing valuable data), it decouples log ingestion from indexing. They call this “Logging without Limits.” In practice, that means you can capture everything without fear of blowing up storage costs, then decide later which data is worth indexing, archiving, or dropping entirely.
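The idea of decoupling ingestion from indexing can be sketched in a few lines. The example below is a conceptual model only, not Datadog's implementation or API: every log goes to a cheap archive, and a rule you control decides which logs are also indexed for immediate search. The function names and the sample records are hypothetical.

```python
def route_logs(logs, should_index):
    """Archive every log; index only those matching should_index."""
    archive, index = [], []
    for log in logs:
        archive.append(log)      # ingestion: nothing is dropped up front
        if should_index(log):
            index.append(log)    # indexing: only what must be searchable now
    return archive, index


def recall(archive, predicate):
    """Later, pull archived logs that match a new question back for analysis."""
    return [log for log in archive if predicate(log)]


logs = [
    {"service": "checkout", "status": "error", "msg": "payment declined"},
    {"service": "checkout", "status": "info", "msg": "cart viewed"},
    {"service": "search", "status": "debug", "msg": "cache hit"},
]

# Index only errors today; everything is still kept in the archive.
archive, index = route_logs(logs, lambda l: l["status"] == "error")

# After an incident, ask a question nobody planned for at ingestion time.
recalled = recall(archive, lambda l: l["service"] == "search")
print(len(archive), len(index), len(recalled))
```

The design choice worth noticing is that the indexing rule can change at any time without losing data, because the decision to keep a log and the decision to index it are made separately.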

Datadog was named a Leader in the Forrester Wave: AIOps Platforms, Q2 2025, earning top scores across several capabilities, including log management, data governance, and cloud infrastructure. It was also positioned as a Leader in the 2025 Gartner Magic Quadrant for Observability Platforms, which underscores its strength across observability, including log management.

Indeed, Datadog has built a reputation as one of the most robust and reliable players in observability and log management. This credibility is important because you need a platform you can rely on for critical logs. Datadog has proven exactly that, rolling out new features at an impressive pace, and maintaining strong customer support and global infrastructure.

Datadog Key Features:

- Logging without Limits: Separates log ingestion from indexing, so you can ingest all logs cost-effectively, choose what to index, and archive the rest.

- Log Rehydration: Recall archived logs on demand for fast historical analysis, ideal for audits and compliance.

- Log-Based Custom Metrics: Convert high-volume log patterns into metrics at ingestion, then retain them longer and analyze them more efficiently.

- Online Archives & Flex Logs: Search logs for up to 15 months and store massive log volumes affordably with tiered retention.

- Seamless Correlation: Easily jump between logs, traces, metrics, and security signals, all in one unified observability platform.

- Log Processing Pipelines: Normalize, parse, mask, or transform logs as they flow through the ingestion pipeline.
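To show what a log processing pipeline does in principle, here is a small sketch that parses a raw line, normalizes the level, and masks email addresses. It is a generic illustration rather than Datadog's pipeline configuration; the line format, the regular expressions, and the step names are assumptions made for the example.

```python
import re

# Assumed line format: "<timestamp> <LEVEL> <free-text message>"
LINE_RE = re.compile(r"^(?P<ts>\S+) (?P<level>[A-Za-z]+) (?P<message>.*)$")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def parse(raw):
    """Split a raw line into fields; keep unparseable lines as-is."""
    m = LINE_RE.match(raw)
    return m.groupdict() if m else {"message": raw}


def normalize(rec):
    """Lower-case the level so 'WARN' and 'warn' group together."""
    out = dict(rec)
    if "level" in out:
        out["level"] = out["level"].lower()
    return out


def mask(rec):
    """Redact email addresses before the record is stored."""
    out = dict(rec)
    out["message"] = EMAIL_RE.sub("[redacted-email]", out["message"])
    return out


def run_pipeline(raw, steps=(parse, normalize, mask)):
    """Apply each step in order to a single raw log line."""
    rec = raw
    for step in steps:
        rec = step(rec)
    return rec


record = run_pipeline("2025-01-01T12:00:00Z WARN login failed for jo@example.com")
print(record)
```

Because each step takes a record and returns a new one, steps can be reordered or extended without touching the others, which is the main appeal of the pipeline model.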

Unique Buying Proposition

Datadog’s unique buying proposition is its “Logging without Limits” approach. It enables you to ingest everything, archive it cheaply, and decide later what to index and analyze.

That flexibility is especially valuable in modern cloud environments where you don’t always know which logs will matter until something breaks or a security event happens. It’s a clear differentiator from tools where filtering and storage decisions are usually made early and can create blind spots.

Feature-In-Focus: Centralized Log Ingestion, Real-time Indexing, and Advanced Analytics

The key features of Datadog as a log management tool are centralized log ingestion, real-time indexing, and advanced analytics. These capabilities allow you to collect, search, visualize, and correlate logs from across your infrastructure and applications.

And what value do you derive from this feature? The answer is simple: fast troubleshooting, anomaly detection, performance monitoring, and audit-ready reporting.

Why do we recommend Datadog?

We recommend Datadog because of its ability to handle massive log volumes. Its tiered log storage and selective indexing enable you to retain full log data for audit and investigation purposes. Its strength is not just aggregation, but the way it correlates logs with real-time infrastructure context and service behavior.

We also appreciate that it is SaaS-based and offers hundreds of built-in integrations across AWS, Azure, GCP, Kubernetes, databases, and business applications. You can start seeing value right away with minimal setup effort thanks to its predefined deployment, preconfigured settings, and best-practice assumptions about how logs should be collected, parsed, and visualized.

Who is Datadog recommended for?

Datadog Log Management is best suited for cloud-first organizations, DevOps teams, and enterprises running distributed systems that need unified observability across logs, metrics, and traces.

It is less suited to SMBs with very simple environments or teams that need strict on-premises deployments due to compliance or data-residency rules. Since it’s SaaS-only, Datadog may not be the right fit if you want complete control over your hosting and storage infrastructure.

Pros:

- Seamless integration: Works smoothly with Datadog’s monitoring, tracing, and security tools.

- Flexible storage and cost control: Offers tiered storage options to help you manage costs.

- Selective indexing: Enables you to store all data while indexing only what’s necessary.

- Strong dashboards and visualizations: Provide clear insights and reporting.

- Wide integrations: Connects easily with cloud and infrastructure tools.

Cons:

- Potentially high costs: Expenses can rise if indexing and storage aren’t carefully managed.

- Setup complexity: Advanced features require time and expertise to configure.

- Long-term access limitations: Retrieving older logs may be slower or more expensive.

- Archived log rehydration: Accessing archived logs adds extra steps.

- SaaS-only deployment: No on-prem option for organizations with strict environment requirements.

Datadog’s log management is offered as part of its cloud‑native observability platform. Log costs are usage‑based. You pay for log ingestion and indexed log retention. There are also flex storage options for lower‑cost long‑term retention.

Log ingestion costs about $0.10 per GB (billed annually), and indexed log retention costs about $1.27 per 1M indexed logs per month for 7-day retention (billed annually). Monthly and on-demand billing may come at higher rates. There is a 14-day free trial of the full platform with no credit card required.
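For a rough sense of how these two rates combine, the sketch below estimates a monthly bill from the figures quoted above. The workload numbers are hypothetical, the function assumes both volumes are measured per month, and it ignores Flex storage, longer retention, and any discounts, so treat the result as an order-of-magnitude estimate rather than a quote.

```python
# Rates quoted in this article (annual billing, 7-day indexed retention).
INGEST_USD_PER_GB = 0.10
INDEX_USD_PER_MILLION_LOGS = 1.27


def estimate_monthly_cost(ingested_gb, indexed_logs):
    """Return (ingest_cost, index_cost, total) in USD for one month."""
    ingest = ingested_gb * INGEST_USD_PER_GB
    index = indexed_logs / 1_000_000 * INDEX_USD_PER_MILLION_LOGS
    return round(ingest, 2), round(index, 2), round(ingest + index, 2)


# Hypothetical workload: 500 GB ingested, 200 million log events indexed.
ingest, index, total = estimate_monthly_cost(ingested_gb=500, indexed_logs=200_000_000)
print(f"ingest=${ingest:.2f} index=${index:.2f} total=${total:.2f}")
```

In this example the indexing charge is several times the ingestion charge, which is why choosing what to index tends to matter more for the bill than how much you ingest.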

10. Sumo Logic

Best For: Cloud-first companies, SaaS providers, digital businesses, and enterprises with distributed systems

Price: Not publicly listed on their website

Sumo Logic is a cloud-native log management and analytics platform. It collects, stores, and analyzes log and machine data from applications, infrastructure, and security systems. Its core strengths are real-time monitoring, powerful search, built-in dashboards, and security analytics.

Sumo Logic is delivered as a SaaS platform, so it scales automatically and reduces infrastructure overhead. It’s used for troubleshooting, detecting security threats, ensuring compliance, and improving application performance. The target users are enterprises and digital-first businesses that prefer a managed, cloud-based solution over running their own log management infrastructure.

Based on our assessment, Sumo Logic is a solid log management tool, but it may not be the right fit for everyone. For instance, because it is cloud-only, it may not work for organizations that require on-premises setups for regulatory or data residency reasons. If you need deep customization or on-premises deployment, a different tool may be a better fit.

Sumo Logic Key Features:

- Cloud-native Platform: Because it’s built natively in the cloud, Sumo Logic eliminates the need for heavy infrastructure and gives you immediate, scalable access to log data as it’s generated.

- Built-in Machine Learning: Sumo Logic automatically learns patterns in your data, flags unusual activity, and sends alerts so your team can respond to minor issues before they become big problems.

- Security Analytics and Compliance Dashboards: It comes with ready-made dashboards and reports for compliance and threat detection.

- High-volume Log Ingestion: You can pull in logs from virtually anywhere, including servers, applications, and cloud platforms.

- Intuitive UI: Its user interface makes it easy to visualize data, run searches, and build automated alerts so that you can gain actionable insights without deep technical expertise.

Unique Buying Proposition

Sumo Logic’s biggest strength is its cloud-native design. It was built from the ground up as a SaaS platform. This gives it a clear edge: elastic scaling without hardware constraints, automatic updates, and no servers to maintain. In practice, this means you can handle terabytes of logs per day without re-architecting your environment or worrying about capacity planning.

From analyst reports and case studies, it is clear that organizations choose Sumo Logic when they need speed and simplicity at scale. So, if you want a platform that scales easily and reduces operational workload, Sumo Logic is hard to beat.

Feature-In-Focus: Log Collection and Real-time Analysis

The cloud-native, centralized log collection and real-time analytics feature turns raw machine data into actionable insights for monitoring, troubleshooting, and security. Sumo Logic collects logs from across your cloud, hybrid, and on-premises environments and consolidates them into a unified platform. It uses scalable indexing and machine learning to spot patterns and anomalies in your data.
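Spotting anomalies in log data can be illustrated with a very simple statistical baseline. The sketch below flags a minute whose error count sits far from the average of the preceding minutes, using a z-score. It is a deliberately basic stand-in and not Sumo Logic's algorithm; the function name, window size, threshold, and sample counts are all assumptions.

```python
from statistics import mean, stdev


def find_anomalies(counts, baseline=5, z_threshold=3.0):
    """Return indexes whose count deviates strongly from the
    mean of the previous `baseline` observations."""
    anomalies = []
    for i in range(baseline, len(counts)):
        window = counts[i - baseline:i]
        mu, sigma = mean(window), stdev(window)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as unusual.
            if counts[i] != mu:
                anomalies.append(i)
            continue
        if abs(counts[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical errors-per-minute series with one obvious spike.
errors_per_minute = [4, 5, 6, 5, 4, 5, 6, 40, 5, 4]
spikes = find_anomalies(errors_per_minute)
print(spikes)
```

A rolling baseline like this adapts to gradual changes in volume, but a single spike also inflates the baseline for the next few points, which is one reason production systems use more robust models.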

Why do we recommend Sumo Logic?

We recommend Sumo Logic as one of the best log management tools because it consistently addresses the real-world pain points organizations face when managing logs at scale.

Furthermore, Sumo Logic goes beyond just collecting logs. It also includes built-in security analytics, compliance dashboards, and machine learning to spot unusual activity. We’ve seen this reflected in analyst evaluations and user feedback, where users and organizations highlight faster troubleshooting, smoother audits, and improved threat detection as measurable outcomes.

Sumo Logic received the 2025 Data Breakthrough Award for “Best Log Analytics Solution”. It was also named Best AI/ML Data Analytics Security Solution at the 2025 SC Awards.

Who is Sumo Logic recommended for?

Sumo Logic is best for cloud-first organizations, SaaS companies, digital businesses, and enterprises with distributed systems that generate large amounts of data.

Since it is fully cloud-native, it works best when your applications, infrastructure, and workflows are already in the cloud, or when you want to offload log management to a managed service.

Pros:

- Fast, scalable ingestion: Supports high-volume log ingestion with real-time search across applications.

- Powerful search and APIs: Offers basic to advanced filtering through robust queries and REST APIs.

- Strong security and analytics: Uses machine learning and security analytics for effective troubleshooting and incident detection.

- Rich dashboards and visuals: Helps teams monitor systems and respond proactively.

- Cloud-native scalability: Runs as a SaaS platform with no infrastructure to manage.

Cons:

- Usage-based pricing: Costs can rise quickly with large data volumes or long retention needs.

- Learning curve: Advanced features and query syntax can be challenging for new users.

- Performance limits: Large datasets or long-range queries may cause performance slowdowns.

Sumo Logic plans are organized into two main tiers: Essentials and Enterprise Suite. The Essentials plan targets small to mid-sized DevOps and SecOps teams and focuses on core log management, AI-driven alerting, and troubleshooting. You can sign up for a 30-day free trial.

The Enterprise Suite is designed for larger or more mature security teams and adds cloud-native SIEM, advanced threat detection, and 24/7 support. Sumo Logic also offers a Flex (credit-based) pricing model that allows unlimited log ingestion with predictable costs based on the data scanned and retained. All plans are delivered as a cloud-native SaaS and are typically billed annually.

11. Logz.io

Best For: Cloud-first or hybrid organizations that generate large amounts of log data

Price: Open 360 log ingestion starts at $0.10/GB of log data ingested

Logz.io is a cloud-native log management and observability platform built on open-source technologies (Elasticsearch, OpenSearch, and Kibana). Beyond just collecting and storing logs, Logz.io layers in AI-powered analytics, anomaly detection, cost-optimization tools, and multi-tier storage so you can troubleshoot faster, reduce noise, and keep log costs under control.

Logz.io sits in an interesting middle ground in the log management space because of how it is architected and used in production environments. The Logz.io Open 360 cloud platform brings together logs, metrics, and traces in one place. It is a strong fit for cloud-first or hybrid organizations that need fast onboarding, elastic scale, and tight cost control.

Its managed OpenSearch foundation, Data Hub tiering, LogMetrics, and AI-driven anomaly detection directly address common operational pain points we see organizations struggle with in real audits and incident response.

Logz.io Key Features:

- AI-Powered Insights: Gain deeper insight into logs with anomaly detection and automated root cause analysis built in.

- Lightning-Fast Search: Queries run up to 4-5x faster than OpenSearch Dashboards, which saves you time when you’re deep in troubleshooting.

- Data Optimization Hub: Filter out noise and cut 30-50% off your data costs without losing control of what’s being ingested.

- Multi-Tier Storage: Store hot data for real-time analysis, warm data for less frequent access, and cold data for long-term retention.

- Seamless Integrations: 300+ integrations out of the box, plus log-trace correlation, which means you can expand into observability whenever you’re ready.

Unique Buying Proposition

Logz.io’s biggest advantage is that it’s fully managed and delivered as a SaaS on AWS. You don’t have to deal with the headaches of scaling clusters, patching systems, or keeping up with the moving parts of open-source licensing changes.

Furthermore, Logz.io helps cut Mean Time to Response (MTTR) in two big ways. First, its queries run 4-5x faster than open-source tools, so you can get answers quickly when systems go down. Second, it tackles the problem of noisy, irrelevant data with its AI-powered Data Optimization Hub, which filters out the clutter so critical insights stand out. Together, these capabilities make it easier to find and fix issues faster.

Feature-In-Focus: Log Ingestion and Real-time Analytics

Logz.io centralizes logs across distributed environments and uses machine learning and anomaly detection to surface meaningful patterns. It also provides dashboards and alerts that help you troubleshoot faster, spot issues early, and maintain visibility.

Why do we recommend Logz.io?

If you’ve ever had a “why are we paying this much just to store logs?” moment in a budget review (and I’ve seen this firsthand with teams), this tool feels like an answer. It helps you analyze faster and cut the fat out of your log data, which translates directly into savings.

Who is Logz.io recommended for?

Logz.io is best for cloud-first or hybrid organizations that generate large amounts of log data but can’t afford runaway costs. CISOs, IT leaders, and DevOps teams will appreciate its AI-driven insights.

Regulated industries will also like the flexible storage and retention for compliance. But if you’re a small team with few logs, it might feel like more tool than you really need.

Pros:

- AI-driven insights: Uses anomaly detection and root cause analysis to reduce manual investigation time.

- High-performance search: Delivers noticeably faster search than many traditional open-source dashboards.

- Cost optimization tools: Features like Data Hub and LogMetrics help control and reduce unnecessary log spend.

- Fully managed SaaS: Eliminates the operational burden of scaling and maintaining infrastructure.

Cons:

- Overkill for small teams: May offer more functionality than startups or small teams require.

- Active cost management required: Filters and data tiers still need careful tuning to avoid rising costs.

- Cloud-first design: Less suitable for strict on-premises-only environments.

- Learning curve: Advanced features can take time to fully understand and use effectively.

Logz.io is purpose-built for monitoring cloud-based microservices and is available directly through the AWS Marketplace. Its pricing model is consumption-based (usage-based). You pay for what you send into the platform and how long you choose to retain or index it. Under this model, costs are tied to actual data volumes (GB per day for logs, metrics, and traces) and retention tiers, with options to set budgets and ingestion caps to help control spending.

You can negotiate plans or use subscription-style pricing that pre-commits a certain level of usage, and overages (data beyond your plan) are charged at a higher “On Demand” rate if configured. Logz.io’s consumption-based pricing for Open 360 starts at $0.10 per GB of log data ingested before filtering and indexing, with a minimum of 10 units. A free 14-day trial is available so you can test the platform before committing.
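To get a feel for what the quoted starting rate means at a given volume, the arithmetic can be sketched in a few lines of shell. This is only a rough, ingestion-only estimate: the function name `estimate_ingest_cost` is illustrative, and the sketch deliberately ignores retention tiers, plan minimums, overage rates, and negotiated discounts, all of which affect a real bill.

```shell
#!/bin/sh
# estimate_ingest_cost GB_PER_DAY DAYS [RATE_PER_GB]
# Rough ingestion-only estimate: GB per day x days x rate per GB.
# RATE_PER_GB defaults to the 0.10 USD starting rate quoted in the text.
# Assumption: retention, tiers, minimums, and discounts are ignored.
estimate_ingest_cost() {
    awk -v gb="$1" -v days="$2" -v rate="${3:-0.10}" \
        'BEGIN { printf "%.2f\n", gb * days * rate }'
}
```

For example, `estimate_ingest_cost 50 30` models 50 GB per day over a 30-day month at the starting rate, and a third argument lets you plug in whatever rate your own quote specifies.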

DIY log archiving

You can write your own copy of Cronolog as a script for Unix or Unix-like operating systems such as Linux and macOS. Although there are plenty of clever things you can do with regular expressions and pattern matching to pick out records for a specific date, the easiest way to get log archives per day is to write a copy script and then schedule it to run at midnight. If the last instructions in the script remove the existing file, new records will accumulate in a separate file throughout the day, to be archived off again at midnight.

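    # Date stamp for today's archive files, e.g. 20240131
    DATE=`date +%Y%m%d`
    MV=/usr/bin/mv
    CP=/usr/bin/cp
    LOGDIR=/opt/apache/logs
    LOGARCH=/www/logs
    FILES="access_log error_log"

    for f in $FILES
    do
        # Copy the live log to the archive, then move it aside
        $CP $LOGDIR/$f $LOGARCH/$f.$DATE.log
        $MV $LOGDIR/$f $LOGDIR/$f.$DATE.saved
    done

    # Recreate an empty access_log for the next day's records
    cat /dev/null > /opt/apache/logs/access_log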
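The pattern-matching alternative mentioned above can be sketched as well. This is a minimal example, assuming the log uses the timestamp layout of Apache's Common Log Format (for example `[10/Oct/2023:13:55:36 +0000]`); the function name `extract_day` is illustrative and not part of any tool.

```shell
#!/bin/sh
# extract_day FILE DAY
# Print only the records in FILE whose timestamp falls on DAY.
# Assumption: DAY is written the way the Common Log Format writes
# dates, e.g. 10/Oct/2023, and each record contains "[DAY:".
extract_day() {
    # -F matches a fixed string, so "[" and "/" need no escaping.
    grep -F "[$2:" "$1"
}
```

A call such as `extract_day /opt/apache/logs/access_log 10/Oct/2023 > /www/logs/access_log.20231010.log` would write one day's records to their own archive file without touching the live log.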

Replace Cronolog

Don’t get stressed that cronolog.org is no longer operating or that the download sites that used to deliver Cronolog no longer list it. Cronolog was not that great, and you could quite easily write your own version in just a couple of minutes.

Log management utilities are very useful and, despite the limited capabilities of Cronolog, many systems administrators came to rely on its services. As you can see from this review, many other log management and analysis tools not only let you parse your log files by date but also give you powerful data visualization and analysis features. Our Editor’s choice, ManageEngine EventLog Analyzer, is an excellent example of this.

Every one of the recommendations in our list of Cronolog replacements can be used or tried for free. All of these facilities give you better service than the do-it-yourself replication of Cronolog. Try out any of these tools and see which of them gives you the extra features needed to improve log and facilities management.

Our Methodology for Choosing the Best Log Management Tools

We evaluated tools across several key areas to ensure they provide comprehensive log management and actionable insights for your organization.

1. Focusing on Your Needs

We looked for tools that meet what CISOs, IT directors, and operations teams really need. This includes factors such as flexible deployment, scalability, clear pricing, and strong compliance and security features.

2. Scalable and Flexible

We gave preference to solutions that can handle large log volumes reliably and adapt to your cloud-native or hybrid environments.

3. Delivering Real Value

We chose vendors that also provide real-time insights, security analytics, and compliance support, in addition to log collection.

4. Avoiding Niche Limitations

We skipped tools that are too specialized or lightweight to cover your broader enterprise requirements.

5. Practical Open-Source Considerations

We excluded open-source options that need heavy in-house expertise or have underdeveloped ecosystems, which most teams can’t support effectively.

Broader B2B Software Selection Methodology

We evaluate B2B software using a consistent, objective framework that focuses on how well a product solves meaningful business problems at a justified cost. This includes assessing overall performance, scalability, stability, and user experience quality. We examine real-world feedback from practitioners to understand how the software behaves outside controlled demos.

We also review vendor transparency, roadmap clarity, support responsiveness, and the pace at which meaningful improvements are released. We follow this approach to ensure each of our recommendations is grounded in practical value, long-term viability, and operational impact, not in marketing claims.

Check out our detailed B2B software methodology page to learn more.

Why Trust Us?

Our work is produced by a team of IT and business software professionals with extensive hands-on experience evaluating, deploying, and managing enterprise technology. We analyze software independently, using evidence-based methods and industry best practices to ensure our assessments remain unbiased and technically sound.

Our goal is to provide you with clear, reliable insights that help reduce risk, shorten evaluation cycles, and support confident decision-making when selecting complex business technology.

Log Management FAQs

What is log aggregation?

Log aggregation combines log files from different sources so that they can be unified for analysis. Different logging systems deploy individual file formats, so log aggregators need to convert log file contents into a unified format. Once all files have the same record layout, they can be submitted together to analytical tools for sorting, searching, filtering, and summarizing.
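The conversion step can be illustrated with a small shell sketch. Both input layouts here are hypothetical, invented for the example: a plain format (`2024-05-01 12:00:00 ERROR disk full`) and a key=value format (`ts=2024-05-01T11:59:59 level=warn msg="cpu high"`). Each is rewritten into one tab-separated layout of timestamp, level, and message, after which the records can be sorted together.

```shell
#!/bin/sh
# normalize_plain FILE
# Hypothetical input: "2024-05-01 12:00:00 ERROR disk full"
# Output: timestamp<TAB>LEVEL<TAB>message
normalize_plain() {
    awk '{
        ts = $1 "T" $2
        msg = $4
        for (i = 5; i <= NF; i++) msg = msg " " $i
        printf "%s\t%s\t%s\n", ts, toupper($3), msg
    }' "$1"
}

# normalize_kv FILE
# Hypothetical input: ts=2024-05-01T11:59:59 level=warn msg="cpu high"
# Output: timestamp<TAB>LEVEL<TAB>message
normalize_kv() {
    awk '{
        ts = $1; sub(/^ts=/, "", ts)
        level = $2; sub(/^level=/, "", level)
        msg = $0; sub(/^.*msg="/, "", msg); sub(/"$/, "", msg)
        printf "%s\t%s\t%s\n", ts, toupper(level), msg
    }' "$1"
}

# aggregate PLAIN_FILE KV_FILE
# Merge both sources into one stream ordered by timestamp. ISO 8601
# timestamps sort correctly as plain text, so sort needs no date logic.
aggregate() {
    { normalize_plain "$1"; normalize_kv "$2"; } | LC_ALL=C sort
}
```

Real aggregators handle many more formats, time zones, and malformed records; the point of the sketch is only that once every record shares one layout, ordinary tools can sort, search, and filter the combined stream.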

How do I collect application logs?

One of the main sources of application logs is the Windows Event system. Windows Event logs are very easy to collect in Windows environments.

- Get to the Control Panel.

- Select System and Security.

- In the System and Security folder look for Administrative Tools and click on the View event logs link.

- In the left tree menu of the Event Viewer, expand Windows Logs.

- Click on Application.

- In the Actions menu in the right-hand side panel, click on Save All Events As.

- In the popup file browser select a folder for the log file.

- Give the log file a name. It will be given the .evtx extension. Press Save.

- In the Display Information popup, click OK.

What is centralized log management?

Most applications and operating systems generate log files and event messages, but most people ignore them. You can learn a lot about the operations of your IT infrastructure if you pay attention to these messages, and if you want security standard accreditation, you need a comprehensive log management policy. Centralized log management requires you to collect all log files and store them in one place. Many businesses use cloud storage for this activity. Aggregating logs for analysis is also a good idea.
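The "collect and store in one place" idea can be sketched locally in shell. This is a simplified illustration, not how the tools reviewed here work: it assumes the source directories are already reachable on the same machine (for example through mounts), the function name `centralize_logs` is hypothetical, and it assumes each source directory has a distinct base name.

```shell
#!/bin/sh
# centralize_logs DEST SRC_DIR...
# Copy every *.log file from each SRC_DIR into DEST/<base name of SRC_DIR>/
# so that same-named files from different sources do not overwrite
# each other. Assumption: source directories have distinct base names.
centralize_logs() {
    dest=$1
    shift
    for src in "$@"; do
        label=$(basename "$src")
        mkdir -p "$dest/$label"
        for f in "$src"/*.log; do
            [ -e "$f" ] || continue   # glob matched nothing in this source
            cp -p "$f" "$dest/$label/"
        done
    done
}
```

A call like `centralize_logs /srv/central-logs /var/log/web /var/log/db` (paths illustrative) would leave one subdirectory per source under the central location. In production, shipping logs over the network with an agent or a syslog forwarder is the more usual approach.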

How do you manage logging in the enterprise?