What is QoS in networking?

Quality of Service (QoS) in networking refers to a range of techniques used to manage how data flows across a network so that important traffic is prioritised and performance remains consistent. It focuses on ensuring that critical applications receive the bandwidth and reliability they need by controlling how network resources are allocated.

QoS works by categorising network traffic into different classes and assigning priority levels to each class. This enables the network to allocate resources more efficiently, ensuring that high-priority data packets, such as voice and video streams, are transmitted first, while less critical traffic, such as email or file downloads, is given lower priority. QoS helps manage network congestion, reducing packet loss and latency by controlling the flow of data, especially during peak usage times.
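The classify-then-prioritise idea can be sketched in a few lines of Python. This is only an illustration: the class names, the application-to-class mapping, and the `Packet` type are all hypothetical, and a real device would classify on header fields rather than on an application label.

```python
from dataclasses import dataclass

# Hypothetical traffic classes; a lower number means the class is sent sooner.
PRIORITY_BY_CLASS = {"voice": 0, "video": 1, "business": 2, "best_effort": 3}

# Illustrative application-to-class mapping; a real policy is site-specific.
CLASS_BY_APP = {
    "voip": "voice",
    "video_conference": "video",
    "payments": "business",
    "email": "best_effort",
    "file_download": "best_effort",
}


@dataclass(frozen=True)
class Packet:
    app: str
    size_bytes: int


def classify(packet: Packet) -> str:
    """Return the traffic class for a packet, defaulting to best effort."""
    return CLASS_BY_APP.get(packet.app, "best_effort")


def transmit_order(packets):
    """Order packets by class priority.

    sorted() is stable, so arrival order is preserved within a class.
    """
    return sorted(packets, key=lambda p: PRIORITY_BY_CLASS[classify(p)])


arrivals = [
    Packet("email", 1200),
    Packet("voip", 160),
    Packet("file_download", 1500),
    Packet("video_conference", 1100),
]
ordered = [p.app for p in transmit_order(arrivals)]
print(ordered)  # ['voip', 'video_conference', 'email', 'file_download']
```

The voice and video packets move to the front even though they arrived after the email, which is the behaviour the paragraph above describes.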

QoS is particularly important for the following types of traffic:

- Voice Traffic – Voice over IP (VoIP) calls require low latency and minimal packet loss for clear communication. QoS ensures that voice packets are prioritised to avoid choppy or dropped calls.

- Video Traffic – Video conferencing, streaming, and surveillance data require high bandwidth and low latency for smooth playback. QoS helps prioritise video traffic to avoid buffering and lag.

- Real-time Applications – Applications such as online gaming and remote desktop services demand fast, uninterrupted data delivery to ensure a seamless experience.

- Mission-Critical Data – Business applications that rely on real-time data, like financial transactions or healthcare services, require reliable, low-latency delivery.

By implementing QoS, network administrators can ensure that critical applications receive the resources they need, even in environments with high network traffic.

Understanding Network QoS

To make things a bit clearer, let us take a real-world example of a traffic jam on a highway at rush hour. All the drivers sitting in the middle of the jam have one plan – make it to their final destinations. And so, at a snail’s pace, they keep moving along.

Then the sound of an ambulance’s siren alerts them to a vehicle that needs to get to its destination more urgently – and ahead of them. And so, the drivers move out of what now becomes the ambulance’s “priority queue”, and let it pass.

Similarly, when a network transports data, it too has a setup in which some types of data are given preferential treatment over all the others. Packets of important data need to reach their destinations much more quickly than the rest because they are time-sensitive and will “expire” if they don’t make it on time.

Why does network QoS matter?

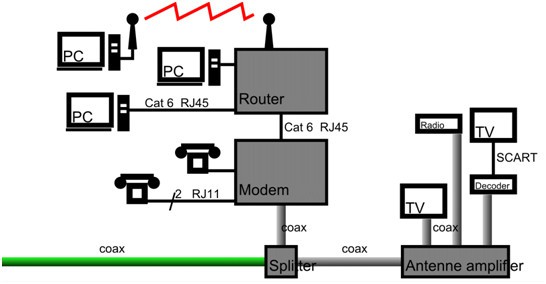

Once upon a time, a business’ network and communication networks were separate entities. The phone calls and teleconferences were usually handled by an RJ11-connected network; the calls were monitored by a PABX system. It ran separately from the RJ45-connected IP network that linked laptops, desktops, and servers. The two network types rarely crossed paths unless, for example, a computer needed a telephone line to connect to the internet. An example of such a network would look like:

When networks carried only data, speed was not so critical. Today, interactive applications that carry audio and video have to be delivered across networks at high speeds and without packet loss or variations in delivery speeds.

People now make business calls using video-conferencing applications like Skype, Zoom, and GoToMeeting, which send and receive video and audio over IP networks. In the interest of speed, these critical applications do without the transport management procedures, such as TCP’s acknowledgements and retransmissions, that standard data transfers typically use.

Before we move any further into the topic of QoS, we need to talk about RTP.

What is RTP?

The Real-Time Transport Protocol or RTP is an internet protocol standard that stipulates ways for applications to manage their real-time transmissions of multimedia data. The protocol covers both unicast (one-to-one) and multicast (one-to-many) communications.

RTP is more commonly used in internet telephony communications where it handles the real-time transmissions of audiovisual data.

While RTP doesn’t in itself guarantee the delivery of the data packets – that task is handled by switches and routers – it does facilitate managing them once they arrive in the networking devices.

QoS is a hop-by-hop transport configuration implemented on the networking devices to make them identify and prioritize RTP packets. Every enabled device between the sender and recipient(s) should also be configured to understand that the packet is a “VIP” one and needs to be pushed along in the priority lane. If even one of the network devices in the relay isn’t configured right, QoS won’t work. The packets will lose their priority and slow down to that device’s data transmission speed.
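To see why a single unconfigured device matters, here is a rough model of a three-hop path. The hop names and the per-hop delays (2 ms in the priority lane, 40 ms in a congested default queue) are invented for illustration, and the model assumes a hop without QoS simply ignores the marking rather than rewriting it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    qos_enabled: bool
    priority_delay_ms: float = 2.0   # assumed delay in the priority lane
    default_delay_ms: float = 40.0   # assumed delay in a congested default queue


def end_to_end_delay_ms(path, marked_priority: bool) -> float:
    """Sum the per-hop delay; a hop without QoS treats every packet as default."""
    total = 0.0
    for hop in path:
        if marked_priority and hop.qos_enabled:
            total += hop.priority_delay_ms
        else:
            total += hop.default_delay_ms
    return total


configured = [Hop("access-switch", True), Hop("core-router", True), Hop("edge-router", True)]
one_gap = [Hop("access-switch", True), Hop("core-router", False), Hop("edge-router", True)]

all_good_ms = end_to_end_delay_ms(configured, marked_priority=True)
one_gap_ms = end_to_end_delay_ms(one_gap, marked_priority=True)
print(all_good_ms, one_gap_ms)  # 6.0 44.0
```

With these made-up figures, one misconfigured hop accounts for almost all of the end-to-end delay, even though the other two devices still honour the marking.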

What happens if we don’t use QoS in networking?

Not having a correctly configured QoS could result in one (or all) of the following issues:

- Latency: When the RTP packets haven’t been assigned their required priorities they will be delivered at the devices’ default speeds. In a congested network, the packets have to travel along with the rest of the non-urgent packets. While network latency itself won’t have an effect on the quality of the delivered audiovisual data per se, it will affect communication between end-users. At 100ms of latency, they will start talking on top of one another as the packets arrive out of sync, and at 300ms the conversation stops being comprehensible.

- Jitter: Jitter is the variation in the delay of packets travelling across a network. It can result in packets arriving late and out of sequence. Real-time applications do without standard transport-level buffering and retransmission, so they can only wait a very short time for a late packet. As the application does not wait for the stream to be assembled correctly, late and out-of-sequence packets get dropped, resulting in distortion or gaps in the audio or video being delivered.

- Packet Loss: This is the worst-case scenario where we find that a number (or parts) of packets are lost due to too much congestion on the networking devices. When a switch or router’s output queue fills up, a tail drop occurs where the device discards any new incoming packets until space becomes available again.

In all the cases we have just seen, QoS can help by sorting the data out, managing the queues, and preventing data loss.
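The tail drop behaviour mentioned under packet loss can be modelled as a bounded first-in, first-out queue. The sketch below is a simplification: the `TailDropQueue` class and the capacity of three packets are hypothetical, and real devices size their queues in bytes or buffers rather than packet names.

```python
from collections import deque
from typing import Optional


class TailDropQueue:
    """Bounded FIFO output queue that discards new arrivals while it is full."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.buffer = deque()
        self.dropped = []

    def enqueue(self, packet: str) -> bool:
        """Accept the packet if there is room; otherwise record it as dropped."""
        if len(self.buffer) >= self.capacity:
            self.dropped.append(packet)
            return False
        self.buffer.append(packet)
        return True

    def dequeue(self) -> Optional[str]:
        """Send the oldest queued packet, or return None if the queue is empty."""
        return self.buffer.popleft() if self.buffer else None


q = TailDropQueue(capacity=3)
# A burst of five packets arrives before the device can send anything.
results = [q.enqueue(f"p{i}") for i in range(1, 6)]
print(results)    # [True, True, True, False, False]
print(q.dropped)  # ['p4', 'p5']

# Once one packet has been sent, space is available again.
first_out = q.dequeue()
accepted_after_drain = q.enqueue("p6")
```

Note that the queue drops whatever arrives last, regardless of how important it is. That is the gap that classification and separate queues are meant to close.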

It doesn’t take much imagination to see how communication and media transfer or streaming could be badly affected when we opt out of using QoS – especially on networks that carry RTP traffic. Even on a perfectly designed network, communication will first become difficult, then deteriorate as the network traffic grows, and finally become impossible.

The three faults – latency, jitter, and packet loss – are, in fact, so critical in determining how well an implementation is working that makers of QoS and network monitoring software, such as SolarWinds, use them as metrics to measure the quality of RTP-based traffic.
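As a rough idea of how these three metrics could be derived from raw timestamps, consider the sketch below. It is not how any particular monitoring product calculates them: the function name and sample data are made up, it assumes synchronised sender and receiver clocks, and jitter is simplified to the mean change in delay between consecutive delivered packets.

```python
from statistics import mean


def stream_metrics(sent_ms: dict, received_ms: dict) -> dict:
    """Compute average latency, jitter, and loss for one stream.

    Both arguments map a sequence number to a timestamp in milliseconds.
    """
    if not sent_ms:
        raise ValueError("no packets were sent")
    delivered = sorted(seq for seq in sent_ms if seq in received_ms)
    delays = [received_ms[seq] - sent_ms[seq] for seq in delivered]
    # Simplified jitter: mean absolute change in delay between consecutive packets.
    changes = [abs(b - a) for a, b in zip(delays, delays[1:])]
    return {
        "latency_ms": mean(delays) if delays else 0.0,
        "jitter_ms": mean(changes) if changes else 0.0,
        "loss_pct": 100.0 * (len(sent_ms) - len(delivered)) / len(sent_ms),
    }


# Five packets sent 20 ms apart; packet 4 never arrives.
sent = {1: 0.0, 2: 20.0, 3: 40.0, 4: 60.0, 5: 80.0}
received = {1: 30.0, 2: 55.0, 3: 70.0, 5: 120.0}

metrics = stream_metrics(sent, received)
print(metrics)
```

In this sample the delays are 30, 35, 30, and 40 ms, so the average latency is 33.75 ms, the simplified jitter is about 6.67 ms, and the loss is 20%.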

The best network tools for QoS monitoring

Paessler QoS Monitoring with PRTG (FREE TRIAL)

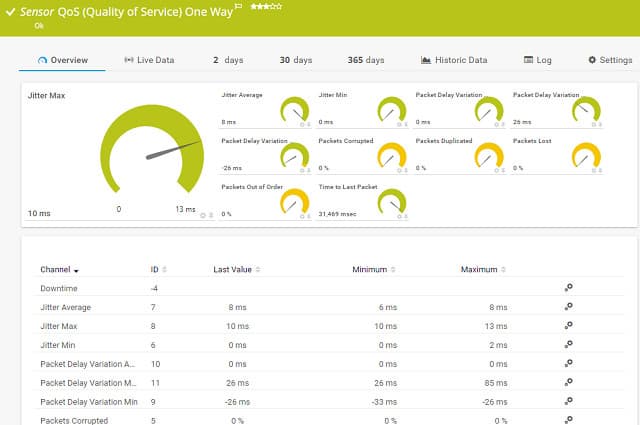

One option that you could investigate for QoS monitoring is Paessler PRTG. This network monitoring suite has a special section that tracks QoS performance. This function shows you tagged traffic flows in real-time and it also stores data for performance analysis and capacity planning.

The PRTG software includes four tracking sensors that cover three different QoS methodologies. These are complemented by a Ping Jitter sensor that tracks the regularity of packet delivery in a stream.

The three types of QoS that PRTG can track are standard QoS, Cisco IP-SLA, and Cisco CBQoS. The tracker for standard QoS is implemented as either a one-way sensor or a roundtrip sensor. These trackers can operate on connections across the internet. In order to get an accurate record of performance at the destination, you need to place a sensor at that remote location for the one-way sensor service. The roundtrip service requires a reflector at the remote location in order to function.

The Cisco IP-SLA sensor is dedicated to monitoring tagged VoIP traffic on your network. It logs a range of metrics for voice traffic including roundtrip time, latency, jitter, delays and the Mean Opinion Score (MOS).
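For readers unfamiliar with the Mean Opinion Score, it rates call quality on a scale from 1 (bad) to 5 (excellent). One widely used way to estimate it from network measurements is the E-model in ITU-T G.107, which converts a transmission rating factor R into a MOS value. The sketch below shows only that conversion; whether PRTG or Cisco IP-SLA derive their MOS figures this way is an assumption not confirmed here, and the function name is hypothetical.

```python
def r_factor_to_mos(r: float) -> float:
    """Convert an E-model rating factor R into an estimated MOS (ITU-T G.107)."""
    if r <= 0:
        return 1.0
    if r >= 100:
        return 4.5
    mos = 1.0 + 0.035 * r + r * (r - 60.0) * (100.0 - r) * 7e-6
    # The polynomial dips fractionally below 1 for very small R, so clamp it.
    return max(1.0, mos)


default_mos = r_factor_to_mos(93.2)  # 93.2 is the E-model default rating
print(round(default_mos, 2))  # 4.41
```

The estimate tops out at 4.5 rather than 5, and a rating of 60 gives a MOS of 3.1, well below what the default rating produces.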

Key Features:

- QoS, CBQoS, and IP-SLA

- VoIP traffic identification

- Mean Opinion Score

- Traffic flow mapping

Why do we recommend it?

Paessler PRTG is a large collection of monitoring tools and you decide which of them to activate. The package includes a QoS one way sensor and a QoS round trip sensor. It also has an IP SLA monitoring service and a CBQoS monitoring service. The system also provides a jitter measurement for tracking the quality of VoIP transmissions.

The Cisco CBQoS sensor follows Class-based Quality of Service implementations. CBQoS is a queuing methodology and if you want to implement it, you are going to have to keep track of more entry points to your routers and switches. You create at least three virtual queues for each device, so there is much more to monitor.

PRTG is able to set itself up and map all of your network infrastructure automatically. However, QoS implementations require decision-making, so you are going to have to set up the method yourself by deciding which types of network traffic to prioritize.

Who is it recommended for?

Any business can benefit from the PRTG QoS system because the PRTG system is free if you only activate 100 sensors. That is a great option for small businesses. Larger businesses will benefit from running general network monitoring alongside the QoS monitoring features of the PRTG specialized sensors.

Pros:

- Offers templates to quickly implement QoS and restore control over network resources

- Utilizes SNMP, NetFlow, and a variety of other protocols to create the most accurate picture of network traffic

- Offers out-of-the-box templates along with customizable sensors (great for those who tinker)

- Supports a completely free version for up to 100 sensors, making this a good choice for both small and large networks

- Pricing is based on sensor utilization, making this a flexible and scalable solution for larger networks as well as budget-conscious organizations

Cons:

- PRTG is a feature-rich platform that requires time to fully learn all of the features and options available

Paessler lets you use PRTG for free if you only activate a maximum of 100 sensors. If you go larger, you can get a 30-day free trial of the system, including the QoS monitor.

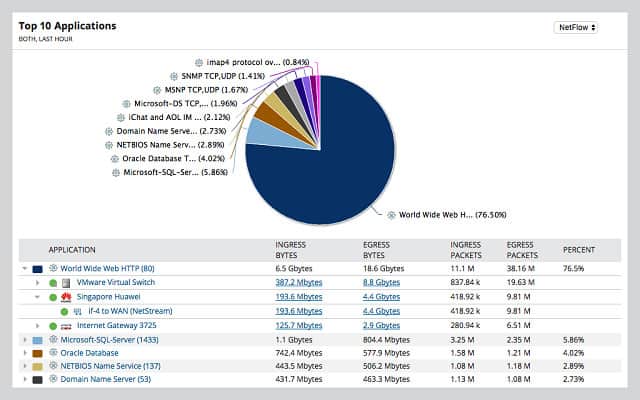

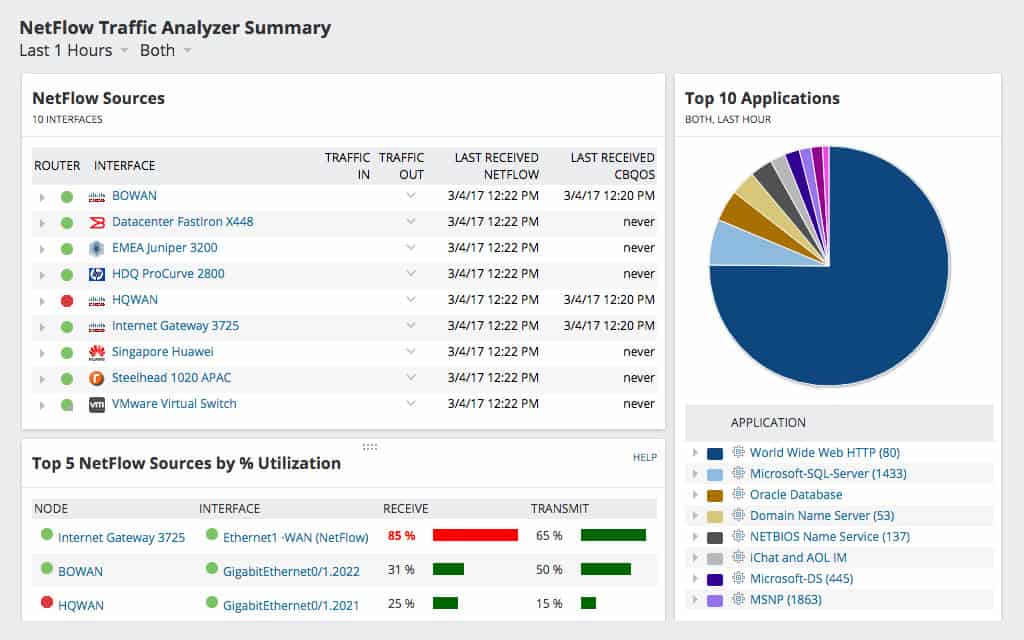

SolarWinds NetFlow Traffic Analyzer

It would be quite unfair to continue without mentioning a little bit more about one of the best network monitoring tools out there: SolarWinds NetFlow Traffic Analyzer.

Key Features:

- Spots traffic bottlenecks

- Identifies overloaded network devices

- Lists traffic per protocol

- Offers traffic shaping measures

- Alerts on capacity issues

Why do we recommend it?

The SolarWinds NetFlow Traffic Analyzer works in tandem with the SolarWinds Network Performance Monitor. This enables you to manage network devices and identify which one has become a bottleneck. QoS and CBQoS analysis is available in this package and there are also IP SLA and MOS measurements available.

This suite of network monitoring applications helps address issues that could be caused by:

- A slow network: A slow network can hold an entire business hostage as it continues to reduce the speed at which data flows. Unless the network’s bottlenecks are removed, the entire organization will experience terrible connectivity.

- Sluggish audiovisual communications: A business that can’t establish a clear communication channel within its network channel will be crippled. Even worse, not being able to communicate clearly with its clients will almost certainly bring it to its knees.

- Unmonitored networks: An administrator that can’t properly monitor the network won’t be able to know about its current status or how to make plans for its future expansion. Without documenting the network and tracking the performance of each piece of equipment, a network manager cannot make informed decisions and is likely to exacerbate network performance problems.

Armed with the NetFlow Traffic Analyzer, network administrators will be able to get rid of the problems we have just seen by:

- Helping with a QoS implementation and its optimization – through data flow feedback

- Taking stock of, and reporting on, the current QoS policy configuration, informing design decisions.

- Monitoring bandwidth usage to identify which applications and devices hog network resources; these can be isolated, rescheduled, or shut down. See also: The best free bandwidth monitoring tools

A typical NetFlow Traffic Analyzer dashboard contains the vital information an administrator needs to monitor statuses and make settings adjustments quickly. An example:

Who is it recommended for?

The combination of the Network Performance Monitor and the NetFlow Traffic Analyzer represents a big investment and this will probably be too much for small businesses. Large organizations will certainly benefit from the automated monitoring system and troubleshooting tools in this package. Mid-sized businesses get value for money from this package by squeezing extra value out of infrastructure.

Pros:

- Simple QoS controls can identify bandwidth hogs and restrict the traffic quickly

- Built for enterprises and large networks, SolarWinds NTA can support massive streams of data across multiple VLANs, subnets, and WANs

- Intuitive reporting allows for both technical and business-oriented reports to be generated with ease

- Uses drag and drop functionality to customize the look and feel of the product

- Supports a wide range of protocols to discover devices and measure traffic patterns

Cons:

- NTA is a highly detailed enterprise tool, and not designed for home users or small LANs

These reports and analytics include: latency, jitter, and packet loss.

How do you configure your QoS?

Cable routers and switches that can be configured to prioritize protocols are usually accessed by router management software suites. The whole process of configuring your QoS preference is a pretty straightforward affair that involves:

- Logging into the application and connecting to the router or switch through it

- Navigating to the QoS configuration menu

- Setting packet priority preferences

And just like that, media packets will be able to traverse networks smoothly. Hardcore network engineers can do all of the tasks listed above via command line configuration interfaces.

How are RTP packets prioritized?

QoS packet prioritization can be done using two main methods:

- Classification: This effective method identifies the packet types and assigns their priority by marking them. The identification can be done using ACLs (Access Control Lists), LAN implementations using CoS (Class of Service), or with the help of switches that use hardware-based QoS markings.

- Queuing: Queues are high-performance memory buffers found in routers and switches. The packets passing through them are held in dedicated memory areas as they wait to be sent on their way. When protocols such as RTP are assigned a higher priority, they are moved to a dedicated queue that pushes the data on at a faster pace, thus reducing the chances of being dropped. The lower-priority queues aren’t afforded this luxury.
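The dedicated-queue idea can be illustrated with a strict-priority scheduler, in which the high-priority queue is always emptied before any lower queue is served. This is one queuing discipline among several and only a sketch: the `StrictPriorityScheduler` class and the queue names are hypothetical. Real devices usually combine a priority queue with weighted scheduling so that lower classes are not starved.

```python
from collections import deque


class StrictPriorityScheduler:
    """Serve queues in priority order; a lower queue is served only when all higher ones are empty."""

    def __init__(self, classes) -> None:
        # classes must be listed highest priority first; dicts keep insertion order.
        self.queues = {name: deque() for name in classes}

    def enqueue(self, traffic_class: str, packet: str) -> None:
        self.queues[traffic_class].append(packet)

    def dequeue(self):
        """Return the next packet to transmit, or None if every queue is empty."""
        for queue in self.queues.values():
            if queue:
                return queue.popleft()
        return None


scheduler = StrictPriorityScheduler(["rtp", "default"])
for traffic_class, packet in [
    ("default", "d1"),
    ("default", "d2"),
    ("rtp", "r1"),
    ("default", "d3"),
    ("rtp", "r2"),
]:
    scheduler.enqueue(traffic_class, packet)

drained = []
while (packet := scheduler.dequeue()) is not None:
    drained.append(packet)
print(drained)  # ['r1', 'r2', 'd1', 'd2', 'd3']
```

Both RTP packets leave first even though two default packets were queued before them.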

An important thing that needs to be remembered here is that a packet’s priority markings are only valid within the network it has been created in. Once it leaves the network, the owners of the recipient network will determine its new priority.

Thoughts to consider when prioritizing packets

Some thoughts and tips that can help when deciding how to prioritize packets include:

- It is generally a good idea to have the priority markings assigned by devices closest to the source of the data. This ensures the packets travel across the entire network with the correct priority.

- The devices of choice for marking incoming packets should always be switches. This is because these devices can load-balance the traffic on the network and share the burden with other switches, thus reducing the burden on their CPUs.

- Incoming traffic is almost always greater than that which is headed in the opposite direction. ISPs normally assign less bandwidth to their clients’ outgoing traffic, and it is there (on the outgoing network path) that QoS needs to be primarily applied.

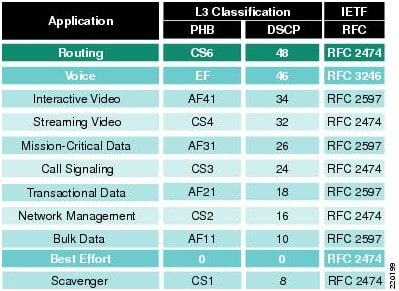

- Cisco has a recommendation on how packets should be marked as is shown in this diagram:

Finally, the success of a QoS implementation always depends on the quality of the policy that governs how packets are classified, marked, and queued. The policy must be carefully drafted for the QoS implementation to be a success.

What not to use QoS for

After reading about QoS it might appear to be a magic elixir that can cure all ailments that cause network congestion. Well, to a certain extent, it can make most RTP communications smoother and make it appear as though it has streamlined the traffic on a network. Unfortunately, it isn’t an all-around solution for every network problem.

QoS should never be used for the following purposes:

Increasing bandwidth

Although QoS helps to streamline the priority of RTP packets and make it look like the network suddenly increased its bandwidth, it should never be construed as such. QoS should never be used as a tool to “increase bandwidth” when all it does is utilize the existing resources a little more efficiently (and in favor of the RTP packets).

Instead, consider looking into caching of files to decrease the amount of data that comes and goes. If that doesn’t work, then it could mean the prescribed bandwidth limits have been reached. When a company reaches its broadband limits, the only viable thing to do is to go out and buy some more of it – not use QoS.

Unclogging the network

If rogue applications are left to run and they end up hogging a network’s bandwidth, implementing QoS is not the solution. While Skype calls might finally start to go through, QoS will not have addressed the root problem. Eventually, the rogue applications will swallow up whatever resources may be available, exhausting the benefits of QoS.

One solution that could work here would be to hunt down the resource-hogging applications and either shut them down or reschedule them to run after hours.

Again, the whole purpose of configuring QoS on a network is to make sure streaming video and audio calls don’t lag (or even get dropped) due to a congested network. It is not a tool that can actually increase bandwidth. It can’t tunnel through a clogged network, either.

A good QoS implementation will improve the quality and speeds of mission-critical data by optimizing the allocated bandwidth and facilitating the packets’ tagging so they are identified and given their assigned priorities. It makes use of the available bandwidth; it doesn’t expand it.
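A little arithmetic makes the point. In the sketch below, a 10 Mbps link faces 15 Mbps of demand, and capacity is handed out in priority order. The figures, the class names, and the `allocate` function are invented for illustration.

```python
def allocate(capacity_mbps: float, demands) -> dict:
    """Grant bandwidth in priority order until the link capacity runs out.

    demands is a list of (name, demand_mbps) pairs, highest priority first.
    """
    remaining = capacity_mbps
    granted = {}
    for name, demand_mbps in demands:
        share = min(demand_mbps, remaining)
        granted[name] = share
        remaining -= share
    return granted


demands = [("voice", 2.0), ("video", 5.0), ("backup", 8.0)]
granted = allocate(10.0, demands)
print(granted)                # {'voice': 2.0, 'video': 5.0, 'backup': 3.0}
print(sum(granted.values()))  # 10.0
```

Voice and video get everything they ask for, but only because the backup job is cut from 8 Mbps to 3 Mbps. The total delivered is still 10 Mbps, and the unmet 5 Mbps can only be served by adding capacity or reducing demand.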

QoS in Networking FAQs

What is the difference between QoS and Network Throttling?

Throttling, which is also known as policing, involves setting an overall limit for traffic throughput and dropping excess traffic. QoS is a method that prioritizes some traffic over others and uses queuing, thus maximizing bandwidth for some traffic at the expense of others.
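A common way to implement a policer is a token bucket, sketched below with simulated timestamps so that the example is deterministic. The token bucket is a technique chosen for this illustration, not something the answer above prescribes, and the class name, rate, and burst size are hypothetical.

```python
class TokenBucketPolicer:
    """Drop any packet that arrives when the bucket holds too few tokens."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float) -> None:
        self.rate = rate_bytes_per_s
        self.burst = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last_time_s = 0.0

    def allow(self, now_s: float, size_bytes: int) -> bool:
        """Refill tokens for the elapsed time, then admit or drop the packet."""
        elapsed = now_s - self.last_time_s
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last_time_s = now_s
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False  # excess traffic is dropped, not queued


policer = TokenBucketPolicer(rate_bytes_per_s=1000, burst_bytes=1500)
# Four 1000-byte packets arriving at the given times (in seconds).
decisions = [policer.allow(t, 1000) for t in (0.0, 0.1, 0.2, 1.0)]
print(decisions)  # [True, False, False, True]
```

The second and third packets are simply discarded because they exceed the configured rate. There is no queue and no notion of priority, which is the key difference from QoS queuing.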

What's the main role of DSCP in QoS?

The Differentiated Services Code Point (DSCP) appears in packet headers. It is a packet-level opportunity to request a priority from QoS management software on network devices. Network managers can choose to turn DSCP detection on or off on the device, so this value can be ignored in favor of a different QoS queuing method.
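An application can request a DSCP value itself when it opens a socket. The DSCP is a six-bit value carried in the upper bits of what used to be the IPv4 Type of Service byte, so it has to be shifted left by two bits before being passed to the `IP_TOS` socket option. The sketch below uses Python's standard `socket` module; the helper function names are hypothetical, and some operating systems ignore or restrict this option, so treat it as illustrative.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding, the code point commonly used for voice


def dscp_to_tos(dscp: int) -> int:
    """Place the six-bit DSCP in the upper bits of the IPv4 TOS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be between 0 and 63")
    return dscp << 2


def open_marked_udp_socket(dscp: int) -> socket.socket:
    """Create a UDP socket whose outgoing IPv4 packets request the given DSCP."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    return sock


tos_value = dscp_to_tos(DSCP_EF)
print(tos_value, hex(tos_value))  # 184 0xb8
```

As the answer above notes, the marking is only a request. Each network device decides whether to trust it, rewrite it, or ignore it.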

Can you explain traffic shaping in QoS?

Traffic shaping is a method used by QoS to get the best value from network capacity. All networks experience surges in demand and traditional capacity planning demands bandwidth provision at the peak level plus a margin of safety. QoS traffic shaping introduces slight delays on certain traffic to enable a network with less capacity than peak demand to cater to all traffic.
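In contrast to a policer, a shaper holds excess packets back and releases them at the configured rate. The following is a minimal sketch using simulated time. The `shape` function, the rate, and the burst are hypothetical, and it assumes an unbounded buffer, which real devices do not have.

```python
def shape(arrivals, rate_bytes_per_s: float):
    """Return the time at which each packet finishes sending at a paced rate.

    arrivals is a list of (arrival_time_s, size_bytes) pairs in arrival order.
    """
    departures = []
    link_free_at = 0.0
    for arrival_s, size_bytes in arrivals:
        # A packet starts sending when it has arrived and the link is free.
        start = max(arrival_s, link_free_at)
        link_free_at = start + size_bytes / rate_bytes_per_s
        departures.append(link_free_at)
    return departures


# A burst of four 1000-byte packets arrives at t = 0 on a link shaped to 2000 bytes/s.
burst = [(0.0, 1000), (0.0, 1000), (0.0, 1000), (0.0, 1000)]
departures = shape(burst, rate_bytes_per_s=2000)
print(departures)  # [0.5, 1.0, 1.5, 2.0]
```

Every packet in the burst is delivered, but the last one waits two seconds. That delay is the price of running a link with less capacity than peak demand.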

Image attributions:

- Feature image by John Carlisle on Unsplash

- Mixed network design – Wikimedia, public domain

- “Netflow Traffic Analyzer Summary” – screenshot taken on 28/05/2018

- “Cisco’s QoS Baseline Marking Recommendations” – Courtesy of Cisco Systems, Inc. Unauthorized use not permitted (Image captured on 28/05/2018)
